Can AI Help Doctors Identify Patients at Risk of Suicide?

AI augments suicide risk detection through EHR analysis and workflow design.

Suicide prevention remains one of the most complex and high-stakes challenges in modern medicine. Despite decades of clinical research, traditional suicide risk assessment, which depends largely on self-reporting, clinician judgment, and episodic screening, has shown limited predictive accuracy. Against this backdrop, AI in healthcare is emerging as a pragmatic augmentation tool rather than a replacement for clinical expertise. Increasingly, health systems are asking a critical question: can AI help doctors identify patients at risk of suicide earlier, more consistently, and at scale?

Drawing on recent clinical studies, large-scale electronic health record (EHR) analyses, and real-world deployments, the answer is cautiously affirmative, with important caveats around ethics, workflow design, and clinical validation.

Glossary of Key Technical Terms

Suicide Risk Assessment. A structured clinical process used to evaluate the likelihood that a patient may attempt or die by suicide within a defined time frame. In AI-driven systems, suicide risk assessment leverages machine learning models trained on Electronic Health Records (EHR), behavioral data, and linguistic signals to generate probabilistic risk predictions.

Area Under the Curve (AUC). The area under the receiver operating characteristic (ROC) curve, a metric that summarizes how well a classification model's scores separate positive from negative cases. In suicide risk prediction, AUC can be read as the probability that the model assigns a higher risk score to a randomly chosen patient who goes on to attempt suicide than to a randomly chosen patient who does not. An AUC of 0.5 indicates chance-level discrimination, while values closer to 1.0 indicate stronger discriminative performance.

Natural Language Processing (NLP). A subfield of artificial intelligence that enables machines to analyze, interpret, and generate human language. In mental health applications, NLP models extract suicide-related signals from unstructured data such as clinician notes, therapy transcripts, patient messages, and journals.

Explainable AI (XAI). AI systems designed to provide transparent, interpretable reasoning for their predictions or decisions. In suicide prevention contexts, explainable AI highlights contributing risk factors (e.g., recent medication change, prior attempts, frequent ED visits), improving clinician trust and supporting ethical deployment.

Why Traditional Suicide Risk Assessment Falls Short

Meta-analyses spanning over 50 years of research have shown that conventional suicide risk prediction performs only slightly better than chance. One landmark review found that clinicians’ ability to predict suicide outcomes has not meaningfully improved over time, largely due to:

- Patient under-disclosure driven by stigma or fear of involuntary treatment

- Fragmented clinical data across specialties and encounters

- Static screening tools that fail to capture temporal risk dynamics

Yet paradoxically, epidemiological data consistently show that 77–83% of individuals who die by suicide had contact with a healthcare provider within the year before death, often for non-psychiatric reasons. This gap between clinical contact and missed risk signals is where AI suicide risk assessment has demonstrated its strongest potential.

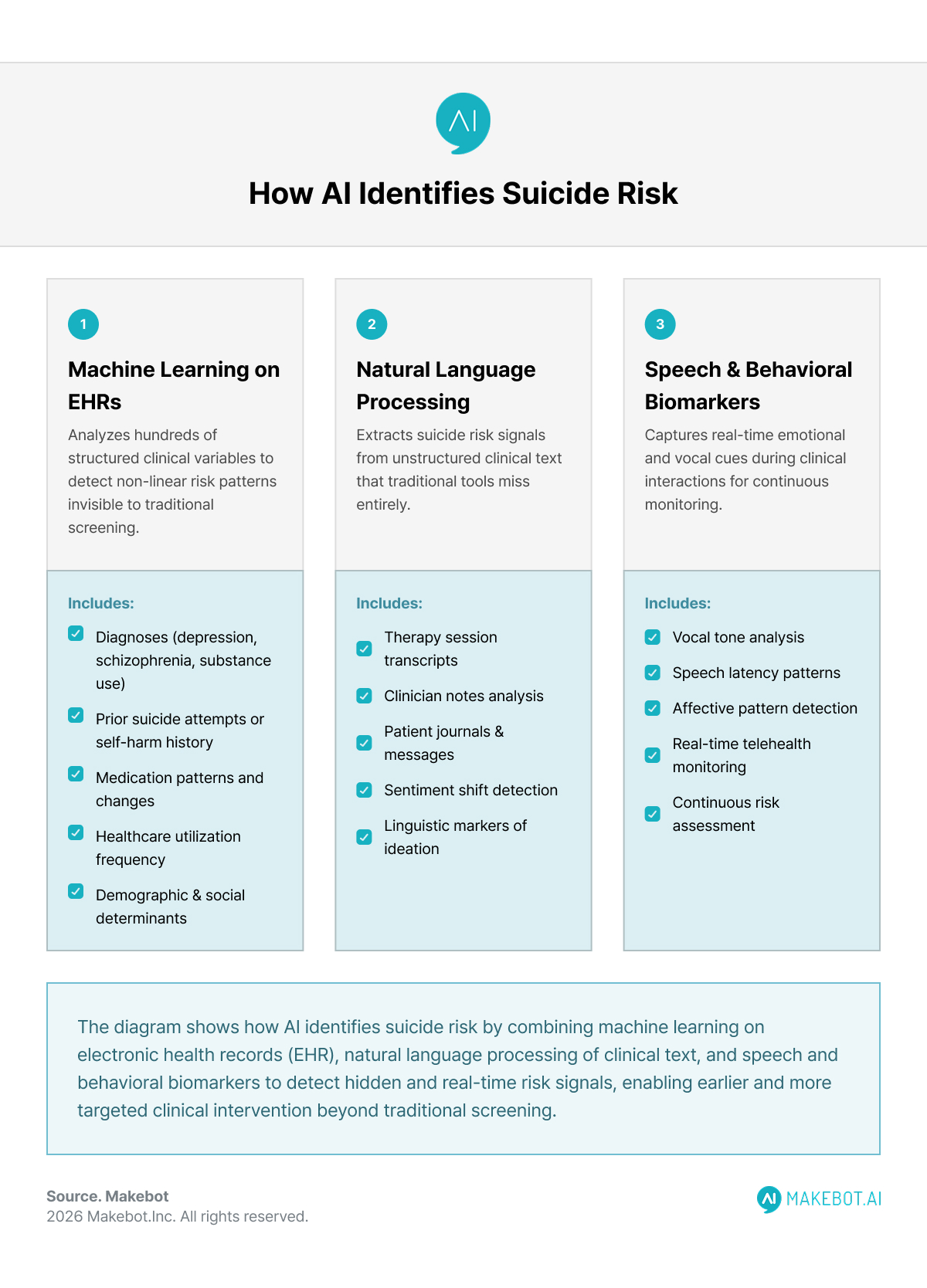

How AI Identifies Suicide Risk in Clinical Settings

Machine Learning on Electronic Health Records (EHR)

Most clinically validated suicide prediction models rely on retrospective and prospective analysis of electronic health records (EHRs). These systems ingest hundreds of routinely collected variables, including:

- Diagnoses (e.g., depression with psychosis, schizophrenia, substance use disorders)

- Prior suicide attempts or self-harm history

- Medication patterns and recent changes

- Healthcare utilization frequency (ED visits, missed follow-ups)

- Demographic and social determinants

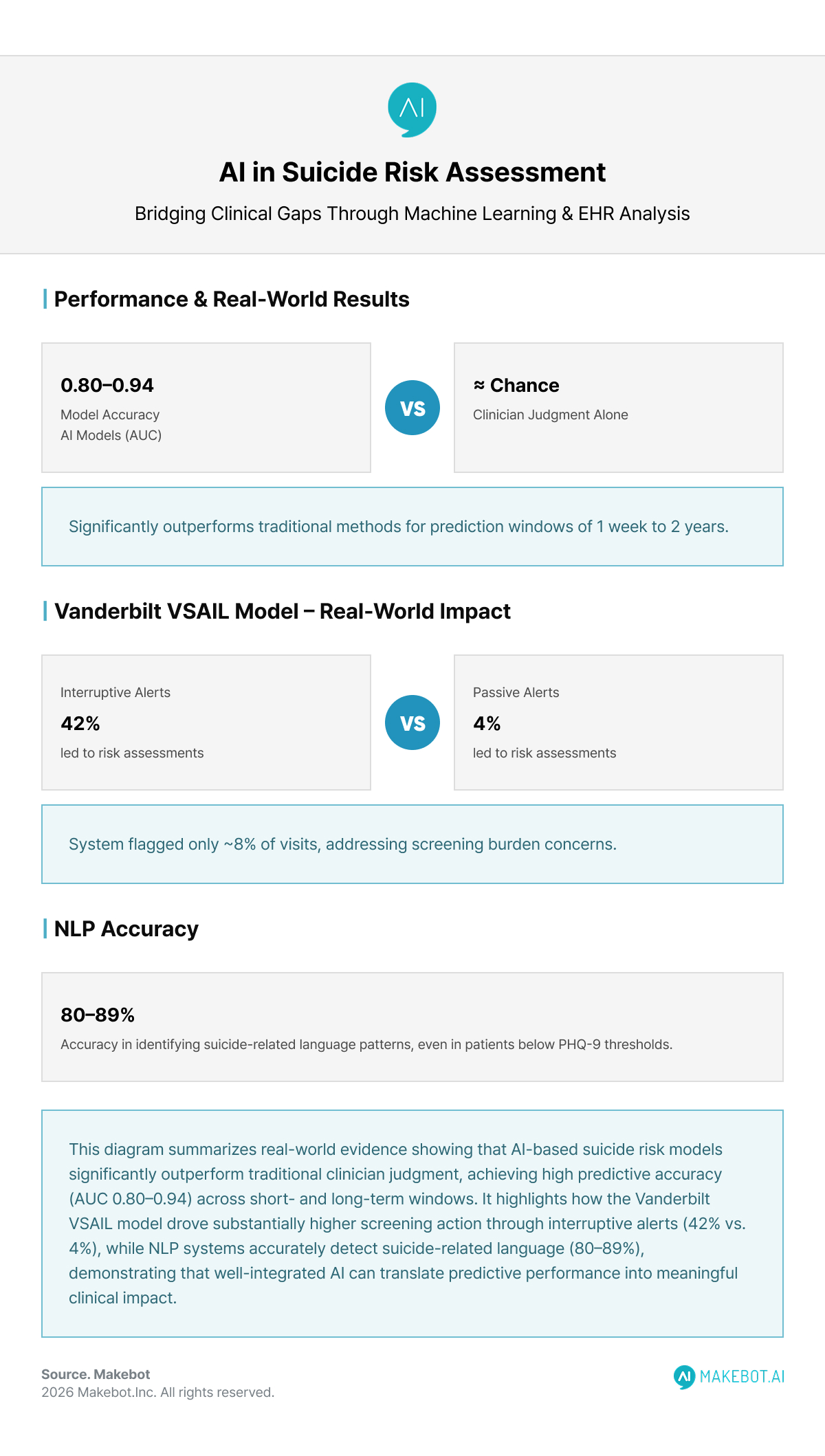

Unlike rule-based screening tools, machine-learning models detect non-linear interactions among these factors. Multiple studies have reported area under the curve (AUC) values between 0.80 and 0.94, significantly outperforming clinician judgment alone when predicting suicide attempts within windows ranging from one week to two years.

Notably, models trained on EHR data alone—without additional surveys—have shown clinically meaningful accuracy, lowering implementation barriers for health systems.
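To make the AUC figures above more concrete, here is a minimal sketch of how AUC can be computed from risk scores and observed outcomes, using the pairwise definition (the share of positive/negative patient pairs in which the positive case receives the higher score). The function name `auc` and the sample data are illustrative assumptions, not taken from any of the cited studies.

```python
from typing import Sequence


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Share of (positive, negative) pairs where the positive case scores higher.

    Ties count as half a win. This is the pairwise (Mann-Whitney) form of AUC.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative case")

    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Synthetic data for illustration only: 1 = later attempt, 0 = no attempt.
labels = [0, 0, 1, 0, 1, 0, 0, 1]
scores = [0.10, 0.30, 0.80, 0.20, 0.35, 0.40, 0.05, 0.90]
print(round(auc(labels, scores), 3))  # 14 of 15 pairs ranked correctly -> 0.933
```

The pairwise loop is quadratic in the number of patients, so it is suitable for explanation rather than production use; rank-based implementations give the same value more efficiently.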

Clinical Decision Support and Workflow Integration

AI’s value is not in generating abstract risk scores, but in how those insights are operationalized within clinical workflows.

A prominent real-world example is the Vanderbilt Suicide Attempt and Ideation Likelihood (VSAIL) model. Embedded into neurology clinics, the system analyzed routine EHR data to calculate a patient’s 30-day suicide attempt risk, then surfaced this information to clinicians in two formats:

- Interruptive alerts (active pop-ups during visits)

- Passive chart indicators (risk displayed without disruption)

The results were stark: 42% of interruptive alerts led to documented suicide risk assessments, compared with just 4% for passive alerts. Importantly, the system flagged only ~8% of visits, addressing concerns around universal screening feasibility and clinician burden.

This highlights a key insight: AI for suicide prevention succeeds or fails not on model accuracy alone, but on thoughtful human-centered design.
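The selective-alerting idea described above (flagging only about 8% of visits) can be sketched as picking a score cutoff from a target flag rate. This is a hedged illustration under simple assumptions, not the VSAIL implementation: the function names, the 8% target, and the synthetic scores are all hypothetical.

```python
from typing import List, Sequence


def threshold_for_flag_rate(scores: Sequence[float], flag_rate: float) -> float:
    """Return the score cutoff that flags roughly the top `flag_rate` share of visits."""
    if not scores:
        raise ValueError("scores must not be empty")
    if not 0.0 < flag_rate <= 1.0:
        raise ValueError("flag_rate must be in (0, 1]")
    ranked = sorted(scores, reverse=True)
    # Always flag at least one visit so the cutoff is well defined.
    n_flagged = max(1, round(len(ranked) * flag_rate))
    return ranked[n_flagged - 1]


def flag_visits(scores: Sequence[float], threshold: float) -> List[bool]:
    """Mark each visit whose risk score is at or above the cutoff."""
    return [s >= threshold for s in scores]


# Synthetic risk scores for 100 visits (illustration only).
visit_scores = [i / 100 for i in range(100)]
cutoff = threshold_for_flag_rate(visit_scores, flag_rate=0.08)
flags = flag_visits(visit_scores, cutoff)
print(cutoff, sum(flags))
```

If several visits share the cutoff score, slightly more than the target share will be flagged. In practice the cutoff would be fixed on historical data and then monitored, since alert volume directly affects clinician burden.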

Beyond EHRs: NLP, Speech, and Multimodal Signals

Natural Language Processing (NLP)

Advances in Generative AI and NLP have expanded suicide risk detection into unstructured clinical data, including:

- Therapy session transcripts

- Clinician notes

- Patient journals and secure messages

NLP-based systems have demonstrated 80–89% accuracy in identifying suicide-related language patterns, often detecting risk in patients who did not meet thresholds on tools like the PHQ-9. These models excel at capturing sentiment shifts, cognitive constriction, and linguistic markers associated with suicidal ideation.

Speech and Behavioral Biomarkers

Emerging clinical pilots integrate AI analysis of vocal tone, speech latency, and affective patterns during therapy or telehealth sessions. While still early-stage, these tools offer real-time augmentation for clinicians, particularly in high-volume or remote care environments.

Together, these approaches reflect a broader move toward AI for mental health that is continuous, multimodal, and context-aware.

Clinical Benefits: Where AI Adds Real Value

When responsibly implemented, AI-assisted suicide risk assessment offers several tangible benefits:

- Earlier identification of high-risk patients outside psychiatric settings

- Selective screening, reducing unnecessary assessments

- Decision support, not decision replacement

- Improved resource allocation, particularly in under-served populations

Critically, studies consistently emphasize that AI performs best when combined with clinician judgment, not when used in isolation.

Limitations, Bias, and Ethical Considerations

Despite promising results, AI-driven suicide risk assessment raises significant concerns:

False Positives and Clinical Harm

Over-prediction may lead to unnecessary hospitalization, psychological distress, or erosion of patient trust. Conversely, false negatives risk missed interventions.
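A short calculation shows why false positives dominate when the outcome is rare. The sketch below computes positive predictive value (the share of flagged patients who are true positives) from sensitivity, specificity, and prevalence. All numbers are hypothetical and chosen only to illustrate the base-rate effect; they do not come from the studies discussed here.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Share of flagged patients who are true positives (Bayes' rule)."""
    for name, value in (("sensitivity", sensitivity),
                        ("specificity", specificity),
                        ("prevalence", prevalence)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    if true_pos + false_pos == 0.0:
        raise ValueError("the model flags no one under these inputs")
    return true_pos / (true_pos + false_pos)


# Hypothetical inputs: 80% sensitivity, 90% specificity, 1% of patients affected.
ppv_rare = positive_predictive_value(0.80, 0.90, 0.01)
print(f"{ppv_rare:.1%}")  # about 7.5% of flagged patients are true positives
```

Under these assumed inputs, more than nine in ten flags would be false alarms, which is why a flag is better treated as a prompt for clinical assessment than as a diagnosis.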

Bias and Data Representativeness

Models trained on historical EHR data may encode systemic biases related to race, socioeconomic status, or access to care. Continuous auditing and subgroup validation are essential.
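Subgroup validation can start with something as simple as comparing error rates across patient groups. The following sketch computes sensitivity and false-positive rate per group from labelled, flagged records. The record layout, the group labels, and the data are assumptions made for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def rates_by_group(records: Iterable[Tuple[str, int, bool]]) -> Dict[str, Dict[str, float]]:
    """Compute sensitivity and false-positive rate for each group.

    Each record is (group, outcome, flagged), where outcome is 1 or 0.
    """
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "fp": 0, "tn": 0})
    for group, outcome, flagged in records:
        if outcome == 1:
            key = "tp" if flagged else "fn"
        else:
            key = "fp" if flagged else "tn"
        counts[group][key] += 1

    report = {}
    for group, c in counts.items():
        pos = c["tp"] + c["fn"]
        neg = c["fp"] + c["tn"]
        report[group] = {
            "sensitivity": c["tp"] / pos if pos else float("nan"),
            "false_positive_rate": c["fp"] / neg if neg else float("nan"),
        }
    return report


# Synthetic audit data for two hypothetical groups, A and B.
audit = [
    ("A", 1, True), ("A", 1, True), ("A", 0, False),
    ("A", 0, False), ("A", 0, True), ("A", 0, False),
    ("B", 1, True), ("B", 1, False), ("B", 0, True),
    ("B", 0, True), ("B", 0, False), ("B", 0, False),
]
report = rates_by_group(audit)
for group, metrics in sorted(report.items()):
    print(group, metrics)
```

In this synthetic example group B has both lower sensitivity and a higher false-positive rate than group A, the kind of gap a recurring audit is meant to surface.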

Explainability and Trust

Black-box models undermine clinician confidence. Health systems increasingly favor explainable AI that highlights why a patient is flagged, not just that they are.
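One simple way to show why a patient is flagged is to rank each feature's contribution to the score. The sketch below assumes a linear risk model, where a feature's contribution is its weight times its value. Deployed systems may use other attribution methods, and the weights and feature names here are hypothetical.

```python
from typing import Dict, List, Tuple


def top_contributors(weights: Dict[str, float],
                     features: Dict[str, float],
                     k: int = 3) -> List[Tuple[str, float]]:
    """Rank features by the size of their contribution (weight * value) to a linear score."""
    contributions = {
        name: weights[name] * value
        for name, value in features.items()
        if name in weights
    }
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return ranked[:k]


# Hypothetical model weights and one patient's feature values (illustration only).
weights = {
    "prior_attempt": 1.5,
    "recent_medication_change": 0.7,
    "ed_visits_last_90d": 0.4,
    "missed_followups": 0.3,
}
patient = {
    "prior_attempt": 1,
    "recent_medication_change": 1,
    "ed_visits_last_90d": 3,
    "missed_followups": 0,
}
top = top_contributors(weights, patient)
for name, contribution in top:
    print(f"{name}: {contribution:+.2f}")
```

Showing the top few factors next to the risk flag gives the clinician something concrete to verify in the chart, rather than an unexplained score.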

Privacy and Consent

The use of sensitive mental health data demands robust governance, cybersecurity, and transparent patient consent frameworks—especially when integrating non-traditional data sources.

What the Evidence Really Says

Systematic reviews and large-scale studies converge on a nuanced conclusion:

- AI can outperform traditional methods in identifying suicide risk

- Clinical utility depends on workflow integration, not algorithm complexity

- Prospective validation remains limited but growing

- Ethical safeguards are as critical as technical performance

In short, AI in healthcare offers a meaningful opportunity to close long-standing gaps in suicide prevention, but only when deployed with restraint, transparency, and clinical partnership.

Conclusion: Can AI Help Doctors Identify Patients at Risk of Suicide?

Yes—but not alone.

AI is best understood as a clinical force multiplier: a way to surface hidden risk signals within everyday care, prompt timely conversations, and support, but never replace, human judgment. When grounded in high-quality electronic health record (EHR) data, aligned with clinician workflows, and governed by strong ethical frameworks, AI suicide risk assessment can meaningfully strengthen suicide prevention efforts across healthcare systems.

The future of suicide prevention will not be purely human or purely algorithmic. It will be collaborative—where clinicians, data, and Generative AI work together to identify risk earlier, intervene smarter, and ultimately save lives.

This is where Makebot bridges the gap between AI suicide risk research and real-world clinical deployment. We help healthcare organizations operationalize AI for suicide prevention by delivering secure, explainable, and workflow-ready Generative AI solutions that integrate seamlessly with Electronic health records (EHR) and existing care pathways.

With industry-specific LLM agents already trusted by leading hospitals, and proven technologies like HybridRAG that improve accuracy while reducing costs, Makebot enables faster PoC-to-production—so AI in Healthcare moves from prediction to measurable clinical impact.

👉 www.makebot.ai | 📩 b2b@makebot.ai
