Reducing Hallucinations in Clinical LLMs Using Retrieval Augmented Generation

RAG reduces clinical LLM hallucinations through evidence-grounded design.

Clinical deployment of Large Language Models has moved from theoretical exploration to operational experimentation in radiology, pathology, clinical documentation, and decision support. Yet one barrier remains structurally persistent: hallucination.

In healthcare, hallucination is not a minor nuisance—it is a systemic risk. A single fabricated negation (“no pneumothorax”) or an invented finding can materially alter diagnosis, downstream treatment decisions, and patient outcomes. While Retrieval Augmented Generation (RAG) has emerged as a leading mitigation strategy, its effectiveness depends heavily on how retrieval and generation components are architected and integrated.

This article examines how RAG reduces hallucinations in clinical LLMs, why naïve implementations still fail, and what system-level refinements are required for production-grade clinical reliability.

Beyond the Build: Uncovering the Hidden Costs of In-House LLM Chatbot Development. Read more here!

Glossary of Technical Key Terms

Entity Probing. A structured evaluation method that tests whether a clinical LLM correctly identifies specific medical entities (e.g., diseases or findings) through controlled yes/no questions grounded in reference reports.

Multimodal Retrieval. A retrieval strategy within RAG that incorporates multiple data modalities—such as medical images and associated reports—to improve grounding and reduce hallucination risk.

Context Noise. Irrelevant, redundant, or misleading information introduced during retrieval that degrades generation quality and increases hallucination probability in Retrieval Augmented Generation systems.

Knowledge Boundary. The inherent limitation of Large Language Models wherein the model can only generate outputs based on patterns learned during training, often leading to hallucinations when operating beyond its parametric knowledge scope.

Why Hallucination Is Structurally Hard in Clinical AI

Modern LLMs are probabilistic sequence models trained to predict plausible continuations—not to guarantee factual fidelity. In clinical contexts, hallucinations manifest in several forms:

- Factual hallucination – Generating findings inconsistent with imaging or structured data.

- Faithfulness hallucination – Deviating from provided context or instructions.

- Capability-boundary hallucination – Attempting to answer beyond knowledge limits.

The review literature on retrieval-augmented systems identifies two root categories of hallucination in RAG pipelines:

- Retrieval Failure

- Data source issues

- Ambiguous or complex queries

- Suboptimal retriever granularity

- Poor retrieval strategy

- Generation Deficiency

- Context noise

- Context conflict

- Long-context degradation (“middle curse”)

- Alignment gaps

- Model capability limits

This classification is critical: hallucination is not solely a generation problem—it is a pipeline-level systems issue.

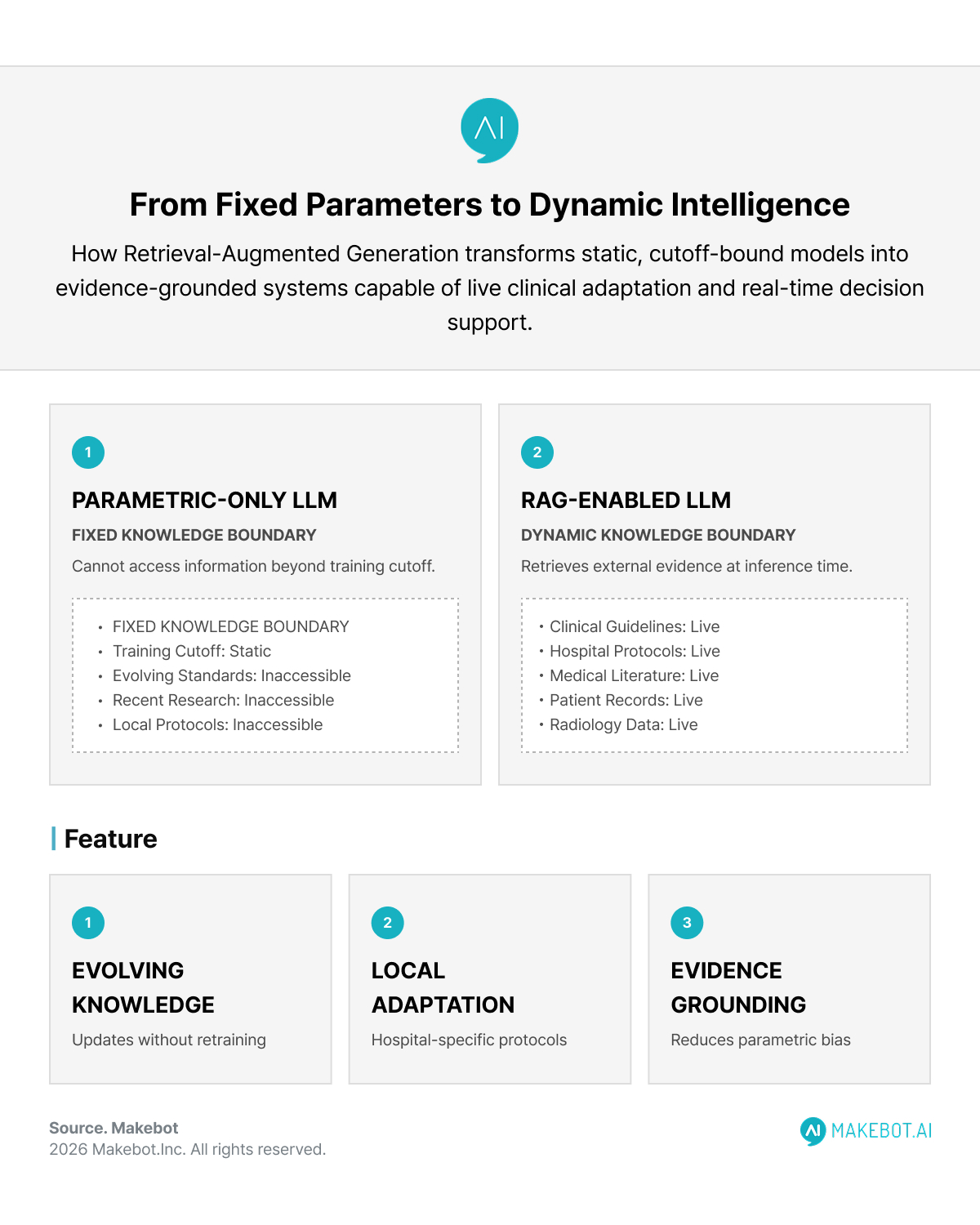

How Retrieval Augmented Generation Changes the Knowledge Boundary

The central limitation of parametric-only Large Language Models is their fixed knowledge boundary. Clinical data evolves; new guidelines emerge; local protocols differ across hospitals.

Retrieval Augmented Generation addresses this by:

- Retrieving external domain knowledge (documents, reports, images).

- Injecting retrieved evidence into the prompt context.

- Generating outputs grounded in retrieved information.

The theoretical advantage is clear: grounding reduces reliance on memorized priors and mitigates hallucination driven by parameter bias.

However, empirical evidence shows that text-only RAG is insufficient in multimodal medical settings.

Visual RAG in Clinical Imaging: A Step Forward

A key advancement comes from Visual Retrieval-Augmented Generation (V-RAG), which integrates both retrieved text and retrieved medical images into inference.

In chest X-ray report generation (MIMIC-CXR) and broader medical captioning (MultiCaRe), V-RAG demonstrated:

- Higher entity-level grounding accuracy

- Improved F1 scores in disease probing

- Reduced hallucinated entities in generated reports

Notably:

- Baseline Med-MLLM F1 (MIMIC-CXR): 0.381

- Text-based RAG (RAT/Img2Loc): 0.711

- V-RAG: 0.721

- Fine-tuned V-RAG: 0.751

The improvement extended to rare entities, which are particularly prone to hallucination due to data sparsity

Why This Matters

Text-only retrieval assumes that retrieved reports are interchangeable with the query case. Clinically, this is flawed. Two chest X-rays may share similar text descriptions but differ in subtle radiographic patterns.

V-RAG allows the model to compare:

- Query image

- Retrieved similar images

- Associated reports

This multimodal triangulation improves grounding fidelity.

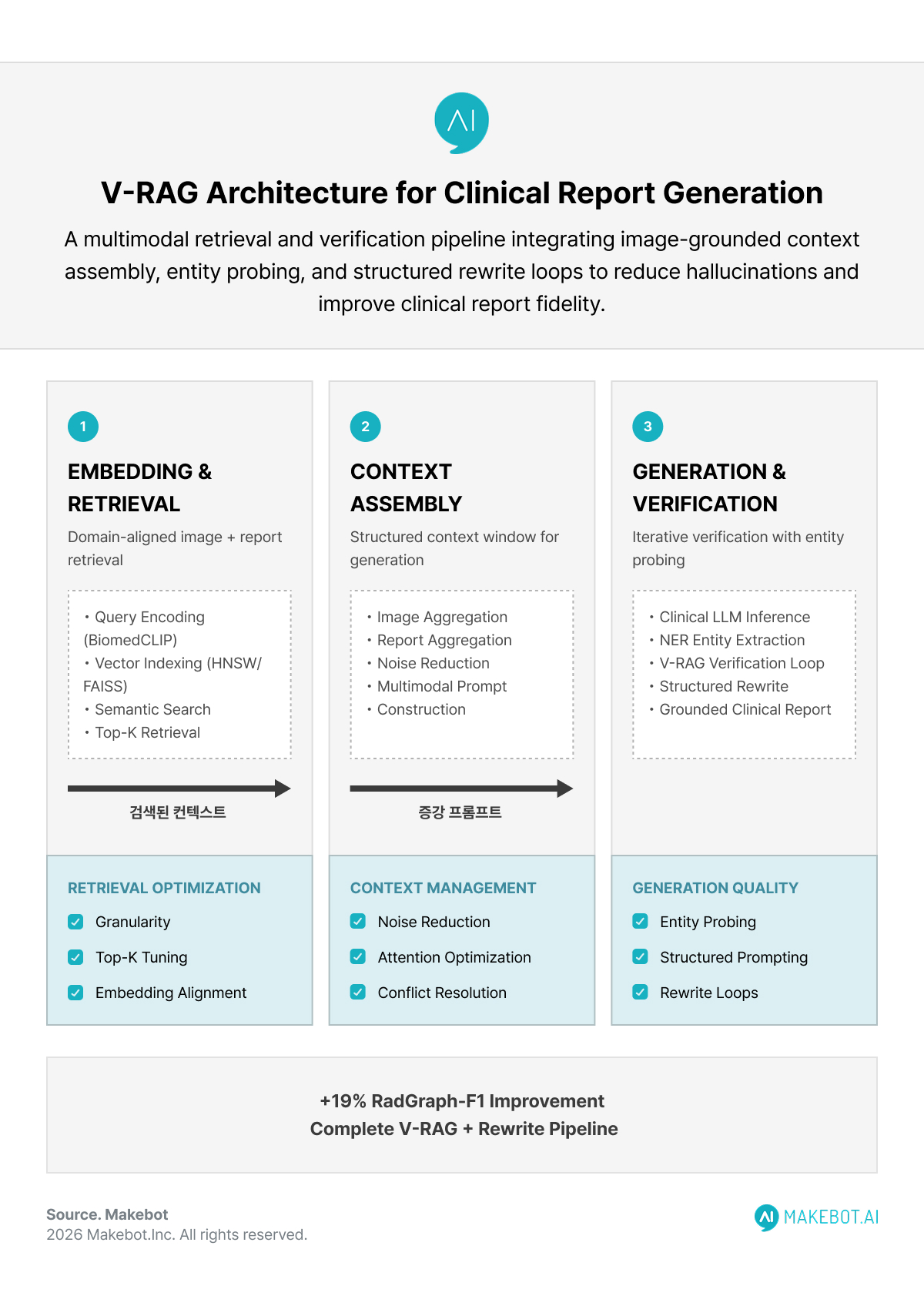

Architecture of Clinical RAG Systems

A robust clinical AI Development pipeline using Retrieval Augmented Generation typically includes:

Embedding & Retrieval Layer

- Biomedical encoders (e.g., BiomedCLIP)

- Vector storage (e.g., FAISS)

- Approximate nearest neighbor search (e.g., HNSW)

Design considerations:

- Retrieval granularity (image-level vs report-section-level)

- Top-k optimization

- Embedding alignment with domain semantics

Multimodal Context Assembly

- Retrieved images (I₁...Iₖ)

- Retrieved reports (R₁...Rₖ)

- Query image

- Structured prompt guidance

Poor assembly leads to context noise or attention dilution.

Generation Phase

- Clinical LLM (e.g., RadFM, LLaVA variants)

- Structured prompting (entity probing, yes/no constraints)

- Optional rewrite layer using a stronger text-only model

A notable downstream strategy involved:

- Generating initial report.

- Extracting entities via NER.

- Probing entities using V-RAG.

- Rewriting report using senior-review-style prompts.

This produced ~19% relative improvement in RadGraph-F1 for report quality

Entity Probing as a Hallucination Diagnostic Tool

Standard metrics like ROUGE often fail to capture clinical inaccuracies.

Entity probing offers a more clinically aligned evaluation:

- Extract disease entities.

- Ask closed-ended questions (“Does the patient have pneumothorax?”).

- Compare model response against ground truth.

Across 9,411 VQA pairs (MIMIC-CXR) and 21,653 pairs (MultiCaRe), entity-level F1 became a reliable hallucination proxy.

This method avoids lexical bias and directly measures factual correctness.

Trade-Offs in Clinical RAG Implementation

Despite its promise, Retrieval Augmented Generation introduces its own failure modes.

Retrieval Failure

- Outdated guidelines

- Biased institutional data

- Poor query formulation

- Over-reliance on semantic similarity

Low-quality retrieval propagates hallucination rather than preventing it

Context Noise

Too many retrieved documents:

- Increase entropy

- Dilute attention

- Reduce factual precision

Too few:

- Limit coverage

- Increase reliance on parametric priors

Context Conflict

If retrieved evidence conflicts with model priors, generation may:

- Favor internal knowledge

- Blend contradictory signals

- Produce hybrid hallucinations

Latency & Cost

Clinical deployments must balance:

- Retrieval frequency

- Multi-hop retrieval

- Context window constraints

- GPU memory limits

From Pilot to Production: How Enterprises Can Successfully Scale LLM Chatbots Across the Organization. More here!

Fine-Tuning for Multi-Image Reasoning

A critical limitation in mainstream Large Language Models is weak multi-image reasoning.

Three fine-tuning tasks were shown to improve V-RAG capability:

- Image-text association

- Image-focus disambiguation

- Learning from extracted similar information

Fine-tuned models demonstrated improved F1 across configurations, including single-image-trained models made V-RAG-capable

This is significant for practical AI Development: it removes dependency on specialized multi-image pretraining and makes RAG more broadly adoptable.

System-Level Strategies for Reducing Hallucination in Clinical LLMs

Beyond V-RAG, a comprehensive mitigation framework should include:

Retrieval Optimization

- Domain-specific embedding models

- Adaptive top-k strategies

- Hybrid dense + sparse retrieval

- Structured knowledge graphs

Prompt Engineering

Prompt techniques—clear instructions, role-based framing, chain-of-thought—play a measurable role in hallucination mitigation.

Detection and Post-Generation Correction

Since hallucinations remain unavoidable, detection layers are essential:

- Entity consistency checks

- Cross-model validation

- Confidence thresholding

- Rewrite loops with higher-capability models

Clinical Implications

The shift from parametric-only LLMs to retrieval-grounded architectures marks a foundational change in clinical AI systems.

Retrieval Augmented Generation:

- Extends knowledge boundaries dynamically.

- Reduces rare-entity hallucination.

- Improves structured report fidelity.

- Enables post-generation clinical correction workflows.

But it is not a silver bullet.

RAG introduces architectural complexity and new failure modes. Clinical safety requires:

- Curated retrieval corpora

- Robust retriever evaluation

- Multimodal grounding

- Continuous monitoring

Conclusion

Hallucination in clinical Large Language Models is not merely a training artifact—it is an emergent property of probabilistic language modeling under uncertainty.

Retrieval Augmented Generation (RAG) reduces hallucination by grounding outputs in external evidence, but its effectiveness depends on careful system design. Visual multimodal retrieval, entity probing diagnostics, retrieval optimization, and structured fine-tuning all contribute meaningfully to safer outputs.

For healthcare-grade AI Development, the objective is not to eliminate hallucination entirely—that remains unrealistic—but to systematically constrain, detect, and correct it.

The future of clinical LLMs will not be defined by scale alone, but by how rigorously their reasoning is anchored to evidence.

Showcasing Korea’s AI Innovation: Makebot’s HybridRAG Framework Presented at SIGIR 2025 in Italy. Read here!

As healthcare organizations move from experimental pilots to production-grade clinical AI Development, grounding, latency control, and hallucination mitigation become mission-critical. Makebot’s HybridRAG framework demonstrates how advanced RAG architectures—combining offline QA pre-generation with optimized semantic matching—can significantly improve accuracy, cost efficiency, and scalability in real-world deployments.

To explore how retrieval-grounded LLM systems can be engineered for high-stakes domains like healthcare, discover Makebot’s HybridRAG research and enterprise-ready architecture here:

👉 Start your AI transformation: www.makebot.ai

📩 Inquiries: b2b@makebot.ai

Why Generative AI Projects Fail and How to Achieve Scalable AI Success

.jpg)

.png)

_2.png)

.jpg)