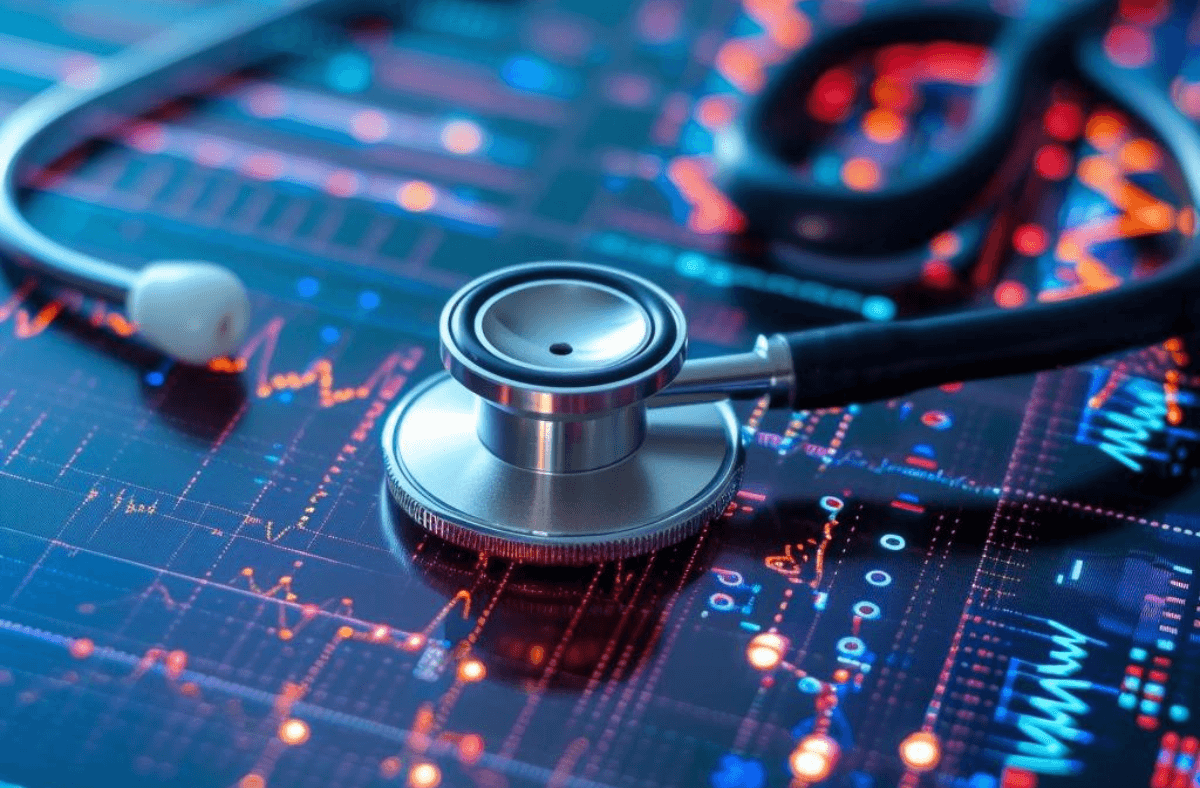

Why AI Fails to Scale in Healthcare—and How to Fix It

Healthcare AI stalls not from poor models, but from fragmented data, weak governance, and low trust.

Introduction

Healthcare is one of the most data-rich industries on the planet, and yet it remains one of the most difficult environments for AI to scale. Billions of dollars flow annually into AI implementation in healthcare—from diagnostic imaging to predictive readmission models—but the evidence consistently shows that most pilots stall, most deployments remain siloed, and most clinicians remain skeptical.

This isn't a failure of the technology itself. Generative AI and Large Language Models have demonstrated remarkable capability in controlled settings: summarizing clinical notes, generating differential diagnoses, flagging drug interactions. The failure is structural. Healthcare institutions carry decades of fragmented data architecture, rigid regulatory environments, and a deeply human set of trust requirements that algorithms cannot shortcut.

This article examines the real reasons AI in healthcare fails to reach scale—and maps out what organizations, policymakers, and AI developers must do differently to close the gap between promise and deployment.

This diagram shows that AI fails to scale in healthcare due to four compounding barriers—data fragmentation, regulatory and liability constraints, poor trust and workflow integration, and an institutional mindset that treats AI as a tech project rather than transformation—each layer narrowing the path from pilot to real-world deployment.

The Scalability Gap: Why AI Pilots Don't Become Programs

The pattern is distressingly familiar. A hospital system partners with an AI vendor, runs a successful 90-day pilot in one department, publishes a case study—and then the project quietly stalls. According to a 2023 McKinsey report, fewer than 20% of healthcare AI pilots successfully transition to full-scale clinical deployment.

The core problem is that scaling AI in healthcare requires far more than a generalizable model. It requires clean, standardized data pipelines; integration with Electronic Health Record (EHR) systems that were never designed for AI interoperability; and clinical validation across patient demographics that may look very different from the pilot cohort.

Most AI vendors optimize for accuracy within a narrow pilot scope. But healthcare data is notoriously heterogeneous. A model trained on patient records from a large academic medical center will perform differently—sometimes dangerously so—when deployed in a rural community hospital. This distribution shift problem is not a flaw unique to healthcare AI, but its consequences here are uniquely high-stakes.
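One way to make distribution shift visible before go-live is to compare a feature's distribution in the pilot cohort against the target site. The sketch below does this for patient age using the Population Stability Index (PSI). The two cohorts, the bin edges, and the 0.25 alert threshold are illustrative assumptions (0.25 is a frequently quoted rule of thumb, not a clinical standard), and the function names are hypothetical rather than taken from any vendor's tooling.

```python
import math

def bin_proportions(values, edges, floor=1e-6):
    """Share of values in each [edges[i], edges[i+1]) bin; out-of-range values are ignored."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(edges) - 1):
            if edges[i] <= v < edges[i + 1]:
                counts[i] += 1
                break
    total = sum(counts)
    if total == 0:
        raise ValueError("no values fall inside the bin edges")
    # A small floor keeps empty bins from producing log(0).
    return [max(c / total, floor) for c in counts]

def population_stability_index(expected, actual, edges):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    e = bin_proportions(expected, edges)
    a = bin_proportions(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical cohorts: an academic-center pilot versus a rural deployment site.
AGE_EDGES = [0, 40, 60, 80, 120]
pilot_ages = [34, 45, 52, 61, 38, 47, 55, 42]
rural_ages = [68, 72, 75, 81, 66, 79, 70, 58]

psi = population_stability_index(pilot_ages, rural_ages, AGE_EDGES)
ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb cutoff for "significant shift"
if psi > ALERT_THRESHOLD:
    print(f"PSI={psi:.2f}: revalidate the model on local data before deployment")
```

A check like this does not say whether the model will fail at the new site. It only signals that the pilot's validation results should not be assumed to transfer.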

The deeper issue is institutional. Clinical AI deployment requires buy-in not just from C-suite executives, but from the frontline clinicians who will actually use the tools. When AI recommendations conflict with clinical intuition—or when models can't explain their outputs in terms a clinician can interrogate—adoption stalls at the individual level, regardless of organizational mandate.

The Data Problem Is Worse Than It Looks

When healthcare leaders cite "data quality" as a barrier to AI transformation in healthcare, they are often understating the problem. Healthcare data isn't just messy—it is structurally fragmented across incompatible systems, siloed by institutional and regulatory boundaries, and inconsistently encoded across different care settings.

Consider the state of EHR interoperability. Despite the HITECH Act and later interoperability mandates such as the 21st Century Cures Act, most U.S. healthcare systems still cannot seamlessly exchange structured clinical data with external partners. HL7 FHIR standards have improved matters at the API level, but the majority of clinically relevant information (physician notes, radiology reads, discharge summaries) remains locked in unstructured text that requires substantial preprocessing before any AI model can use it.
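To illustrate what "improved at the API level" means in practice, here is a minimal sketch of reading a FHIR Observation resource. The JSON follows the general shape of a FHIR Observation, but the patient reference and values are made up, and `extract_observation` is a hypothetical helper, not part of any FHIR library.

```python
import json

# Illustrative FHIR-style Observation; identifiers and values are invented.
OBSERVATION_JSON = """
{
  "resourceType": "Observation",
  "status": "final",
  "code": {
    "coding": [
      {"system": "http://loinc.org", "code": "8867-4", "display": "Heart rate"}
    ]
  },
  "subject": {"reference": "Patient/example"},
  "effectiveDateTime": "2024-01-15T09:30:00Z",
  "valueQuantity": {"value": 72, "unit": "beats/minute"}
}
"""

def extract_observation(resource):
    """Flatten one Observation into a row a downstream model pipeline could consume."""
    if resource.get("resourceType") != "Observation":
        raise ValueError("expected an Observation resource")
    coding = resource["code"]["coding"][0]
    quantity = resource.get("valueQuantity", {})
    return {
        "patient": resource["subject"]["reference"],
        "loinc_code": coding["code"],
        "label": coding.get("display"),
        "value": quantity.get("value"),
        "unit": quantity.get("unit"),
        "taken_at": resource.get("effectiveDateTime"),
    }

row = extract_observation(json.loads(OBSERVATION_JSON))
print(row)
```

The same measurement written in a free-text note ("HR 72, pt resting") carries none of these coded fields, which is why unstructured documentation needs a separate extraction step.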

This is precisely where Large Language Models enter the conversation with genuine promise. LLMs can process unstructured clinical text at scale, extracting diagnostic signals, summarizing visit histories, and flagging documentation gaps. But they also inherit the noise embedded in that text. Inconsistent clinical terminology, abbreviations that vary by institution, and documentation patterns shaped by billing incentives rather than clinical accuracy all degrade model performance in ways that are difficult to detect without domain expertise.

- EHR systems use incompatible data schemas, forcing costly ETL (extract, transform, load) pipelines before any AI layer can be applied.

- Patient records often contain conflicting entries, especially across care transitions, creating ground truth ambiguity during model training.

- Protected Health Information (PHI) regulations severely limit cross-institutional data sharing, constraining the diversity of training datasets.

The result is that data preparation—not model development—consumes the majority of time and cost in most healthcare AI projects. Organizations that don't invest in a robust data infrastructure before launching AI initiatives are essentially building on sand.
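The schema and conflict problems listed above can be sketched in a few lines. The example below assumes two hypothetical source systems that record the same body-weight measurement with different field names, date formats, and units; every field name, the common `PatientWeight` record, and the 2 kg tolerance are illustrative assumptions, not a real EHR schema.

```python
from dataclasses import dataclass
from datetime import date, datetime

LB_TO_KG = 0.45359237

@dataclass(frozen=True)
class PatientWeight:
    """Common target record that both source systems are mapped into."""
    patient_id: str
    measured_on: date
    weight_kg: float

def from_system_a(rec):
    # Hypothetical System A: ISO dates, weight already in kilograms.
    return PatientWeight(rec["patient_id"], date.fromisoformat(rec["date"]),
                         float(rec["weight_kg"]))

def from_system_b(rec):
    # Hypothetical System B: US-style dates, weight in pounds.
    measured = datetime.strptime(rec["ObsDate"], "%m/%d/%Y").date()
    return PatientWeight(rec["MRN"], measured,
                         round(float(rec["WtLbs"]) * LB_TO_KG, 1))

def find_conflicts(records, tolerance_kg=2.0):
    """Return same-patient, same-day weights that disagree by more than the tolerance."""
    grouped = {}
    for r in records:
        grouped.setdefault((r.patient_id, r.measured_on), []).append(r.weight_kg)
    return {key: vals for key, vals in grouped.items()
            if max(vals) - min(vals) > tolerance_kg}

a = from_system_a({"patient_id": "P001", "date": "2024-03-05", "weight_kg": "81.5"})
b = from_system_b({"MRN": "P001", "ObsDate": "03/05/2024", "WtLbs": "190"})
conflicts = find_conflicts([a, b])
print(conflicts)  # entries here need human review before they become training labels
```

Even this toy mapping needs a unit conversion, a date parser, and a conflict rule for a single field. Multiplying that across hundreds of fields and several source systems is where the preparation cost comes from.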

Regulatory and Liability Barriers to Clinical AI Deployment

Regulatory uncertainty is one of the most underappreciated healthcare AI adoption barriers. The FDA's framework for AI-based Software as a Medical Device (SaMD) has matured significantly, but ambiguity remains—particularly for adaptive models that update their parameters post-deployment. Clinicians and hospital legal teams are acutely aware that if an AI-assisted decision contributes to a negative patient outcome, the liability landscape is murky at best.

This liability gap has a direct behavioral consequence: risk-averse healthcare organizations limit clinical AI deployment to low-stakes administrative workflows, while avoiding the high-value clinical decision support applications where AI could have the greatest impact.

Generative AI and AI chatbots face a particularly sharp version of this problem. When a Large Language Model generates a clinically plausible but factually incorrect medication recommendation—a phenomenon known as hallucination—the consequences are not abstract. Several high-profile incidents involving AI-generated medical misinformation have amplified institutional caution, even when the underlying model performance is statistically strong.

Regulatory bodies are actively working to address this. The FDA's predetermined change control plan framework, the EU AI Act's classification of healthcare AI as high-risk, and emerging ISO standards for clinical AI all represent progress. But for most healthcare organizations operating today, the compliance burden of deploying AI in healthcare at scale remains daunting—particularly for systems without dedicated AI governance infrastructure.

The Trust and Workflow Integration Problem

Even when data is clean and regulatory hurdles are navigated, healthcare AI scalability frequently fails at the human layer. Clinicians are not passive recipients of AI recommendations—they are trained professionals with established cognitive workflows, strong professional identities, and legitimate skepticism toward systems that cannot explain their reasoning.

Research from Stanford and the Mayo Clinic has consistently shown that clinician acceptance of AI tools is strongly correlated with two factors: perceived accuracy and interpretability. A model with 92% accuracy that cannot explain its outputs may face more adoption resistance than a model with 85% accuracy that produces clear, auditable reasoning traces. This dynamic has significant implications for how AI implementation in healthcare should be designed.

Workflow integration compounds the trust problem. Most clinical AI tools are deployed as standalone applications or EHR plug-ins that require clinicians to navigate additional interface layers during already time-pressured workflows. Rather than reducing cognitive load, poorly integrated AI tools increase it—leading to alert fatigue, workaround behaviors, and eventual abandonment.

The organizations seeing the greatest success with scaling AI in healthcare have taken a fundamentally different approach:

- They co-design AI tools with frontline clinicians rather than deploying them top-down.

- They embed AI outputs within existing EHR interfaces rather than creating parallel systems.

- They prioritize explainability, even when it marginally reduces model performance.

- They treat AI deployment as a change management initiative, not a software rollout.

This shift in framing—from technology project to organizational transformation—is the single most important variable separating successful AI transformation in healthcare from another failed pilot.

Where AI Is Actually Gaining Traction

It would be misleading to paint a picture of uniform failure. AI in healthcare is scaling successfully in specific domains, and the pattern of success is instructive.

Administrative automation is leading the way. AI chatbots and LLM-powered tools are being deployed effectively for prior authorization processing, appointment scheduling, patient intake documentation, and insurance coding. These applications share a common profile: lower clinical risk, clearer performance metrics, and faster feedback loops than diagnostic AI.

In medical imaging, AI has achieved genuine clinical acceptance. FDA-cleared algorithms for detecting diabetic retinopathy, pulmonary nodules, and stroke indicators are now used routinely in several major health systems. The success here reflects years of rigorous clinical validation, narrow task scope, and workflows where AI augments rather than replaces radiologist judgment.

Generative AI is making measurable inroads in ambient clinical documentation, where LLMs transcribe and structure physician-patient conversations in real time, reducing documentation burden without inserting AI into the diagnostic reasoning chain. Tools like Nuance DAX and Suki AI have reported significant reductions in documentation time, with high clinician satisfaction scores. This represents a pragmatic deployment pattern: using LLMs where the value is clear and the liability exposure is manageable.

The pattern is clear: healthcare AI adoption scales fastest where clinical risk is bounded, data is structured, and workflow disruption is minimal.

The diagram presents a five-pillar framework showing that scaling AI in healthcare requires building strong data foundations, starting with low-risk use cases, and reinforcing deployment with governance, explainability, and proactive regulatory engagement to achieve sustainable, real-world impact.

How to Fix It: A Framework for Scalable Healthcare AI

Getting healthcare AI to scale requires addressing structural barriers, not just improving models. Based on patterns from successful deployments, a pragmatic framework emerges.

Build the data foundation first. Before deploying any AI initiative, organizations must invest in data governance, EHR standardization, and interoperability infrastructure. This is unglamorous work, but it is foundational. Without it, even excellent models will underperform.

Start with high-certainty, low-risk applications. Prioritizing AI deployment in administrative workflows, ambient documentation, and structured imaging tasks builds institutional confidence, generates measurable ROI, and creates the change management muscle needed for more complex clinical applications.

Embed AI governance into the deployment process. Every clinical AI deployment should have a designated clinical owner, defined performance monitoring protocols, and a clear escalation path when model behavior degrades or clinical outcomes diverge from expectations. Governance is not a compliance checkbox—it is what separates sustainable deployment from a pilot that slowly fades.
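As a rough illustration of what a "performance monitoring protocol" with an "escalation path" could look like in code, the sketch below tracks a rolling agreement rate between model predictions and clinician-confirmed outcomes. The `ModelMonitor` class, the 0.85 threshold, the window sizes, and the owner label are all hypothetical. A real deployment would choose its own metrics and thresholds with clinical input.

```python
from collections import deque

class ModelMonitor:
    """Tracks rolling accuracy and names who to escalate to when it degrades."""

    def __init__(self, clinical_owner, min_accuracy=0.85, window=50, min_samples=10):
        self.clinical_owner = clinical_owner
        self.min_accuracy = min_accuracy
        self.min_samples = min_samples
        # Only the most recent `window` comparisons are kept.
        self.outcomes = deque(maxlen=window)

    def record(self, prediction, confirmed_outcome):
        """Store whether the model agreed with the clinician-confirmed outcome."""
        self.outcomes.append(prediction == confirmed_outcome)

    def rolling_accuracy(self):
        if len(self.outcomes) < self.min_samples:
            return None  # too few cases to judge
        return sum(self.outcomes) / len(self.outcomes)

    def check(self):
        accuracy = self.rolling_accuracy()
        if accuracy is None:
            return "insufficient_data"
        if accuracy < self.min_accuracy:
            return f"escalate_to:{self.clinical_owner}"
        return "ok"

monitor = ModelMonitor(clinical_owner="sepsis-model-owner")  # hypothetical owner label
for _ in range(9):
    monitor.record("high_risk", "high_risk")
monitor.record("high_risk", "low_risk")
status_before = monitor.check()   # 9 of 10 correct
for _ in range(5):
    monitor.record("low_risk", "high_risk")
status_after = monitor.check()    # 9 of 15 correct
print(status_before, status_after)
```

The point of the sketch is the design, not the numbers: degradation is detected automatically, and the alert is routed to a named clinical owner rather than to a generic IT queue.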

Invest in explainability, not just accuracy. For AI implementation in healthcare to earn clinician trust, the reasoning behind AI recommendations must be auditable. This may mean accepting models with slightly lower statistical performance in exchange for interpretable outputs that clinicians can verify and override.

Treat regulatory engagement as a strategic asset. Organizations that develop proactive relationships with regulators—participating in FDA pre-submission programs, contributing to standards development—gain a competitive advantage in navigating the compliance landscape for scaling AI in healthcare.

The organizations that will lead AI transformation in healthcare over the next decade are not those with the most sophisticated models. They are those building the institutional infrastructure—data, governance, culture, and clinical trust—that allows AI to operate reliably at scale.

References

- McKinsey & Company — Transforming healthcare with AI (2023): https://www.mckinsey.com/industries/healthcare/our-insights/transforming-healthcare-with-ai

- Deloitte Insights — AI in healthcare: The future of patient care and health management: https://www2.deloitte.com/us/en/insights/industry/health-care/artificial-intelligence-in-health-care.html

- FDA — Artificial Intelligence and Machine Learning in Software as a Medical Device: https://www.fda.gov/medical-devices/software-medical-device-samd/artificial-intelligence-and-machine-learning-software-medical-device

- NEJM Catalyst — Artificial Intelligence in Health Care: Anticipating Challenges to Ethics, Privacy, and Bias: https://catalyst.nejm.org/doi/full/10.1056/CAT.19.0404

- Stanford HAI — AI in Health and Medicine (2022 AI Index Report): https://hai.stanford.edu/research/ai-index-2022

- JAMA Network — Key Challenges for Delivering Clinical Impact with Artificial Intelligence: https://jamanetwork.com/journals/jamainternalmedicine/fullarticle/2792088

- World Health Organization — Ethics and governance of artificial intelligence for health: https://www.who.int/publications/i/item/9789240029200

- Nuance DAX Ambient Clinical Intelligence: https://www.nuance.com/healthcare/ambient-clinical-intelligence.html
