How LLMs Are Embedded into Modern Marketing Automation Platforms

LLMs transform marketing automation into context-aware orchestration systems.

Marketing automation is no longer just about scheduling emails and triggering workflows. In the modern market, automation platforms are evolving into intelligent orchestration layers: systems that don’t simply execute predefined rules, but dynamically interpret data, generate content, and adapt strategies in real time.

At the center of this transformation are Large Language Models (LLMs), embedded deeply within CRM stacks, content engines, customer engagement platforms, and analytics dashboards. Rather than operating as standalone chat interfaces, these models are increasingly becoming a cognitive layer inside marketing automation architectures.

This article explores how that embedding works—technically, operationally, and strategically—and what it means for organizations deploying AI in marketing at scale.

Glossary of Technical Key Terms

Retrieval-Augmented Generation (RAG). A hybrid architecture that combines vector-based document retrieval with generative LLM outputs to ground responses in enterprise data and reduce hallucination risk.

Vector Database. A specialized database that stores embeddings (numerical representations of text) to enable fast semantic search and similarity matching in LLM-powered systems.

Agentic Workflow. An AI orchestration approach where an LLM acts as a reasoning engine that autonomously selects tools, triggers APIs, and executes multi-step tasks based on defined goals.

Fine-Tuning. The process of training a pre-trained LLM on domain-specific data (e.g., brand guidelines, CRM history, compliance rules) to improve accuracy, tone alignment, and contextual relevance.

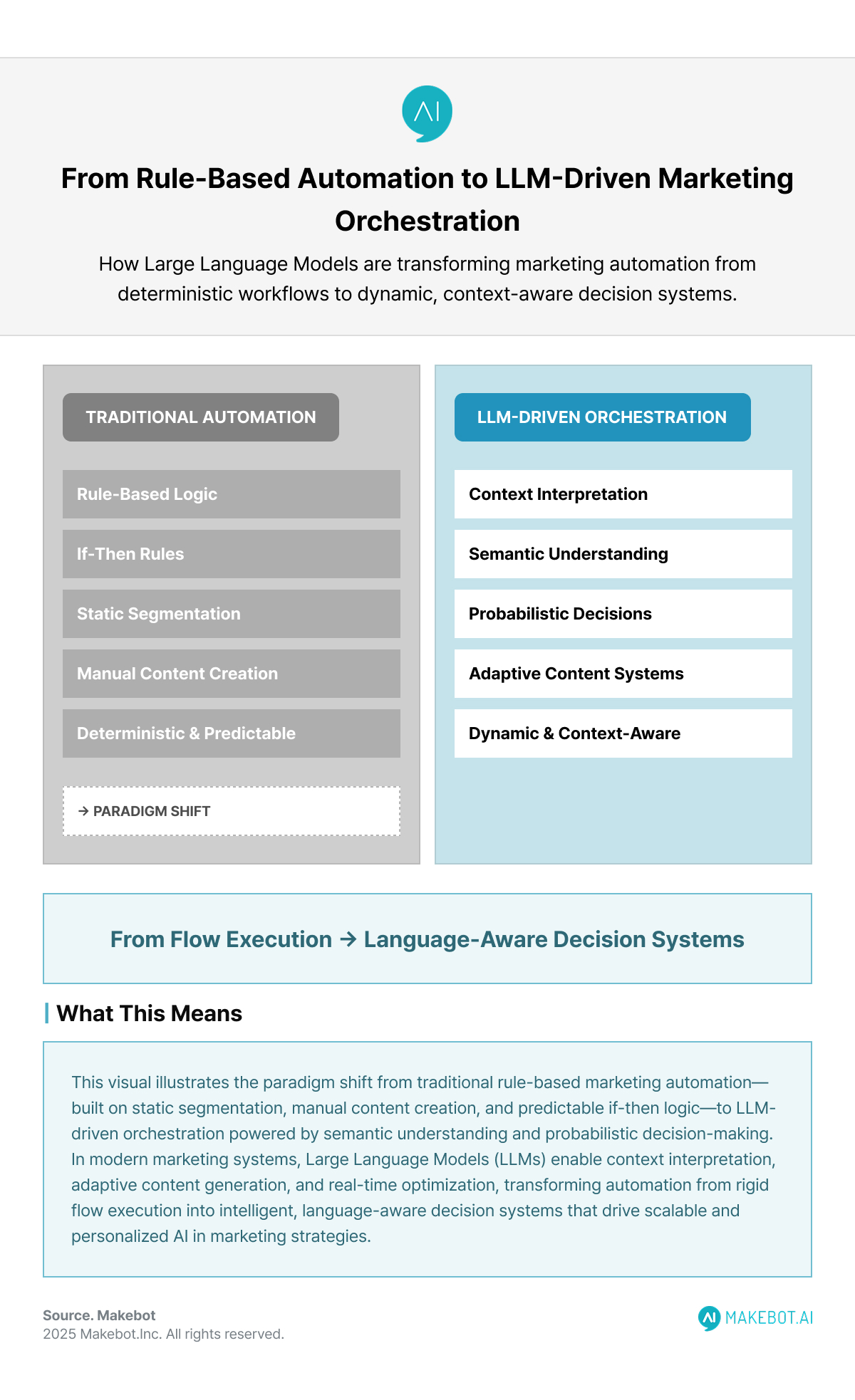

From Rule-Based Automation to Language-Driven Orchestration

Traditional marketing automation platforms were built around deterministic logic:

- If the user opens email → send follow-up.

- If lead score > threshold → notify sales.

- If cart abandoned → trigger reminder sequence.
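As a point of comparison for the rest of the article, the three rules above can be written as a few fixed conditionals. This is a minimal sketch, not any vendor's implementation: the `Event` shape, the action names, and the threshold value are assumptions made up for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical threshold; the article does not state a value.
LEAD_SCORE_THRESHOLD = 80


@dataclass
class Event:
    kind: str            # e.g. "email_opened" or "cart_abandoned"
    lead_score: int = 0  # current score of the lead tied to the event


def rule_based_actions(event: Event) -> list[str]:
    """Map an event to actions using fixed if/then rules only."""
    actions = []
    if event.kind == "email_opened":
        actions.append("send_follow_up")
    if event.lead_score > LEAD_SCORE_THRESHOLD:
        actions.append("notify_sales")
    if event.kind == "cart_abandoned":
        actions.append("trigger_reminder_sequence")
    return actions
```

Every outcome is fully determined by the event type and one number. Nothing in the function looks at what the customer actually wrote or did beyond those fields.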

These systems relied on:

- Static segmentation

- Manual content creation

- Rule-based scoring

- Fixed approval workflows

The architecture was predictable but limited. It lacked semantic understanding and adaptability.

With Large Language Models, the paradigm shifts from rule execution to context interpretation. Instead of asking:

“Did the user click link A?”

Platforms now ask:

“What does the customer’s recent behavior imply about intent, sentiment, and next-best action?”

This shift transforms automation from flow-based logic to probabilistic, language-aware decision systems.

Architectural Patterns: How LLMs Are Embedded

Modern platforms embed LLM capabilities using three primary architectural models:

A. API-Augmented SaaS Layer

In this model, the automation platform calls an external LLM API (e.g., from OpenAI or another hosted model provider).

Architecture flow:

- CRM data →

- Prompt construction layer →

- LLM API call →

- Structured output parsing →

- Action engine execution

This pattern is common among CRM vendors that embed generative assistants into existing UI layers.

Example: CRM-based email drafting, lead summary generation, and call transcript analysis.
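The five-step flow above can be sketched in a few functions. This is an illustrative outline under stated assumptions: `fake_llm` stands in for a real hosted model call so the code runs offline, and the JSON keys, function names, and the `queue_email_for_review` action are hypothetical rather than part of any specific platform.

```python
import json
from typing import Callable


def build_prompt(crm_record: dict) -> str:
    """Prompt construction layer: turn CRM data into model instructions."""
    return (
        "Draft a short follow-up email for this lead and reply with JSON "
        'containing the keys "subject" and "body".\n'
        f"Lead: {json.dumps(crm_record, sort_keys=True)}"
    )


def parse_output(raw: str) -> dict:
    """Structured output parsing: reject anything that is not the expected JSON."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("model output is not a JSON object")
    missing = {"subject", "body"} - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data


def run_pipeline(crm_record: dict, call_llm: Callable[[str], str]) -> dict:
    """CRM data -> prompt -> LLM call -> parsed output -> action for the engine."""
    draft = parse_output(call_llm(build_prompt(crm_record)))
    return {"action": "queue_email_for_review", "to": crm_record["email"], **draft}


def fake_llm(prompt: str) -> str:
    """Stand-in for the external API call (assumption, not a real client)."""
    return json.dumps({"subject": "Following up", "body": "Hi Dana, ..."})


result = run_pipeline({"name": "Dana", "email": "dana@example.com"}, fake_llm)
```

Passing the model call in as a function keeps the parsing and action steps testable without network access, and makes it easier to swap providers later.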

Advantages:

- Fast deployment

- Scalable compute

- Lower infrastructure burden

Trade-offs:

- Data governance concerns

- Token cost scaling

- Latency constraints

- Limited customization without fine-tuning

B. Retrieval-Augmented Generation (RAG) Integration

Enterprise-grade systems increasingly use RAG pipelines:

- Customer data stored in CRM or CDP

- Embeddings generated and stored in a vector database

- Real-time retrieval of relevant context

- Context injected into LLM prompt

- LLM generates grounded response

This reduces hallucination risk and improves personalization quality.
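To make the retrieval and injection steps concrete, here is a deliberately tiny sketch. It assumes a toy "embedding" based on word counts and an in-memory list in place of a real embedding model and vector database; `TinyVectorStore` and `build_grounded_prompt` are hypothetical names, and the final LLM call is left out.

```python
from __future__ import annotations

import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real system uses a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorStore:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._rows: list[tuple[str, Counter]] = []

    def add(self, doc: str) -> None:
        self._rows.append((doc, embed(doc)))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self._rows, key=lambda row: cosine(q, row[1]), reverse=True)
        return [doc for doc, _ in ranked[:k]]


def build_grounded_prompt(question: str, store: TinyVectorStore, k: int = 2) -> str:
    """Inject the retrieved context into the prompt sent to the LLM."""
    context = "\n".join(f"- {doc}" for doc in store.search(question, k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )
```

The model only sees what retrieval returns, so answer quality depends heavily on how the source data is chunked, embedded, and kept up to date.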

In retail marketing automation, this enables:

- Real-time inventory-aware responses

- Policy-compliant messaging

- Contextual upsell recommendations

Why this matters:

Pure generative output is risky in customer-facing automation. RAG adds factual grounding and compliance alignment.

C. Agentic Workflow Orchestration

The most advanced embedding involves agent-based systems.

Instead of generating isolated text outputs, the LLM acts as a reasoning engine that:

- Decides which API to call

- Queries structured databases

- Initiates follow-up campaigns

- Triggers multi-step automation flows
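The loop behind this behavior can be sketched as follows. It is a simplified illustration: `scripted_planner` is a hard-coded stand-in for the LLM's decision step, and the tool names and return strings are invented for the example.

```python
from __future__ import annotations

from typing import Callable


def query_orders(customer_id: str) -> str:
    """Hypothetical tool: look up recent orders in a structured database."""
    return f"orders for {customer_id}: 2 in the last 30 days"


def start_campaign(customer_id: str) -> str:
    """Hypothetical tool: kick off a follow-up campaign."""
    return f"campaign started for {customer_id}"


TOOLS: dict[str, Callable[[str], str]] = {
    "query_orders": query_orders,
    "start_campaign": start_campaign,
}


def scripted_planner(goal: str, history: list[dict]) -> dict:
    """Stand-in for the LLM: choose the next tool from what has run so far."""
    done = [step["tool"] for step in history]
    if "query_orders" not in done:
        return {"tool": "query_orders"}
    if "start_campaign" not in done:
        return {"tool": "start_campaign"}
    return {"tool": "finish"}


def run_agent(goal: str, customer_id: str, planner: Callable, max_steps: int = 5) -> list[dict]:
    """Ask the planner for a tool, run it, record the result, repeat."""
    history: list[dict] = []
    for _ in range(max_steps):
        name = planner(goal, history)["tool"]
        if name == "finish":
            break
        if name not in TOOLS:
            raise ValueError(f"planner requested unknown tool: {name}")
        history.append({"tool": name, "result": TOOLS[name](customer_id)})
    return history


steps = run_agent("re-engage customer", "c-42", scripted_planner)
```

The `max_steps` cap and the allow-list of tools are the two guardrails here: the planner can only call functions that were registered, and it cannot loop indefinitely.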

Modern distributed architectures now integrate:

- LLMs

- Vector databases

- Event streams (Kafka-style pipelines)

- Workflow orchestration engines

This mirrors the historical evolution from monolithic systems to distributed microservices—now with generative AI as a cognitive service layer.

Embedded Use Cases Across the Marketing Stack

Content Automation Engines

One of the earliest integrations was content generation:

- Product descriptions

- Email sequences

- Ad copy

- Blog drafts

- Meta descriptions

- Localization variants

But modern implementations go beyond draft generation.

Platforms now:

- Analyze competitor gaps

- Predict content performance

- Suggest topic clusters

- Auto-generate metadata

Enterprise implementations report measurable time savings in metadata tagging and asset discoverability.

The key evolution: content automation becomes content intelligence.

CRM & Sales Automation

Embedded LLM systems in CRM environments can:

- Draft personalized outreach emails

- Summarize sales calls

- Suggest next-best actions

- Generate pipeline forecasts

Salesforce’s Einstein GPT and similar tools integrate generative capabilities directly inside CRM records.

This is no longer a standalone chatbot—it’s contextual AI operating within structured enterprise data.

Customer Support & Conversational Flows

Customer service automation sees a particularly strong impact.

BCG estimates generative AI can increase service productivity by 30–50%.

Embedded LLMs:

- Handle tier-1 queries

- Summarize tickets

- Draft human-reviewed responses

- Analyze sentiment across transcripts

In advanced setups:

- The automation platform dynamically routes tickets based on semantic analysis.

- Escalation thresholds are determined by tone and complexity, not keyword flags.
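One way to picture routing by tone and complexity rather than keywords is shown below. This sketch assumes an upstream LLM step has already scored each ticket; the `TicketAnalysis` fields, the thresholds, and the queue names are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical thresholds; real values would be tuned against labeled tickets.
NEGATIVE_SENTIMENT = -0.5
HIGH_COMPLEXITY = 0.7


@dataclass
class TicketAnalysis:
    sentiment: float   # -1.0 (very negative) to 1.0 (very positive)
    complexity: float  # 0.0 (simple) to 1.0 (complex)
    topic: str         # label assigned by the upstream model


def route_ticket(analysis: TicketAnalysis) -> str:
    """Escalate on tone or complexity first, then route by topic."""
    if analysis.sentiment <= NEGATIVE_SENTIMENT or analysis.complexity >= HIGH_COMPLEXITY:
        return "escalate_to_human"
    if analysis.topic == "billing":
        return "billing_queue"
    return "tier1_auto_reply"
```

Keeping the routing rule separate from the model's scoring means the thresholds can be audited and adjusted without retraining or re-prompting anything.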

Content Operations & DAM Systems

Enterprise content management systems embed Large Language Models for:

- Automated metadata tagging

- Asset enrichment

- Content gap analysis

- Workflow optimization

Predictive analytics helps teams prioritize content creation based on forecasted demand.

According to McKinsey research cited in enterprise AI adoption discussions, 92% of companies plan to increase AI investments, with content operations among the highest-impact areas.

This reflects a structural shift: automation platforms are becoming knowledge systems.

Governance, Compliance, and Brand Voice Control

Embedding AI in marketing is not purely a technical challenge—it is a governance challenge.

Major risks include:

- Hallucination

- Brand drift

- Regulatory violations

- Bias in personalization logic

Enterprise solutions mitigate risk through:

- Fine-tuning on brand-specific corpora

- Vocabulary controls and phrase blocking

- Human-in-the-loop approval workflows

- Audit trails and logging

The takeaway: embedding LLMs requires embedding control layers alongside them.
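A control layer of this kind can be quite small. The sketch below combines three of the mitigations listed above (phrase blocking, a human approval step, and an audit trail); the blocked phrases, status names, and log format are assumptions chosen for illustration.

```python
from __future__ import annotations

import json
import time

# Hypothetical blocked phrases; a real list would come from legal and brand teams.
BLOCKED_PHRASES = ("guaranteed results", "risk-free")


def review_draft(draft: str, audit_log: list[str]) -> dict:
    """Check a generated draft, decide its status, and record the decision."""
    hits = [phrase for phrase in BLOCKED_PHRASES if phrase in draft.lower()]
    # Nothing is sent automatically: clean drafts still wait for a human.
    status = "blocked" if hits else "pending_human_approval"
    entry = {
        "ts": time.time(),
        "status": status,
        "blocked_phrases": hits,
        "draft": draft,
    }
    audit_log.append(json.dumps(entry))  # append-only trail, one JSON line per decision
    return entry


log: list[str] = []
ok = review_draft("Try our new plan today.", log)
bad = review_draft("Guaranteed results in a week!", log)
```

Substring matching is the crudest possible filter. It is shown here only to mark where a stronger policy check would sit in the flow.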

Performance & ROI Considerations

The real question in the modern market isn’t “Can we use LLMs?”

It’s “What measurable leverage do they create?”

Documented outcomes across use cases include:

- Time-to-publish reductions (e.g., 70% in product content workflows)

- Double-digit improvements in product page conversion

- Increased sales ROI (10–20% in AI-assisted sales environments)

But ROI depends on:

- Data quality

- Integration maturity

- Prompt engineering discipline

- Continuous monitoring

- Alignment with business KPIs

Organizations that treat LLM embedding as a surface-level feature often see short-term gains but fail to scale impact.

Strategic Trade-Offs in Embedding LLM Capabilities

Embedding LLM capabilities into marketing automation platforms requires navigating several strategic trade-offs. One of the primary decisions involves choosing between cloud-based API models and on-premise LLM deployments, where organizations must balance scalability and rapid deployment against data sovereignty and tighter control over sensitive information.

Another critical consideration is whether to rely on fine-tuned models or advanced prompt engineering; fine-tuning can deliver higher accuracy and stronger brand alignment but comes with increased training costs and infrastructure demands. Companies must also determine the appropriate depth of automation—greater automation improves operational efficiency but increases exposure to compliance, reputational, and operational risks.

Finally, adopting fully autonomous agents can significantly accelerate execution and decision-making, yet this speed introduces higher governance complexity, requiring robust monitoring, oversight mechanisms, and failure-handling systems. Together, these trade-offs define the strategic architecture choices organizations must make when embedding LLMs into enterprise marketing environments.

The Strategic Implications for AI Marketing Tools

The next generation of AI marketing tools will not present themselves as “AI features.”

They will function as:

- Autonomous optimization layers

- Semantic decision engines

- Cross-channel personalization orchestrators

- Predictive content strategists

The automation layer becomes adaptive, not reactive.

And critically: Human marketers do not disappear. Instead, their role shifts upward—to governance, strategy, experimentation design, and system oversight.

Conclusion

Embedding Large Language Models into marketing automation platforms represents a structural shift in enterprise software architecture.

We are moving from:

- Static workflows to dynamic, context-aware orchestration systems.

- Manual segmentation to probabilistic personalization engines.

- Content production pipelines to intelligence-driven content ecosystems.

In the modern market, AI in marketing is no longer experimental. It is infrastructural.

The organizations that approach LLM embedding as a disciplined systems integration challenge—rather than a superficial content tool—will unlock compounding strategic advantage.

Because once automation can understand language, interpret intent, and reason over data, it stops being a tool.

It becomes a co-architect of growth.

Showcasing Korea’s AI Innovation: Makebot’s HybridRAG Framework Presented at SIGIR 2025 in Italy

As marketing automation platforms evolve into intelligent orchestration systems, grounding LLM outputs in structured enterprise knowledge becomes mission-critical. Makebot’s HybridRAG framework demonstrates how retrieval-aware architectures can dramatically improve latency, accuracy, and scalability in real-world deployments.

If your organization is embedding LLM capabilities into CRM, content operations, or automation workflows, explore how Makebot’s HybridRAG infrastructure can help you build production-grade, governance-ready AI systems that deliver measurable business impact.

Learn more about Makebot’s AI innovations and enterprise-ready LLM frameworks here:

👉 Start your AI transformation: www.makebot.ai

📩 Inquiries: b2b@makebot.ai
