How Retrieval Augmented Generation Improves Product Recommendation Accuracy in E-Commerce

RAG improves e-commerce accuracy by grounding recommendations in real-time data.

The modern e-commerce platform no longer competes on inventory alone; it competes on intelligence. Customers expect search, support, and recommendations to understand intent instantly and respond with precision.

Traditional collaborative filtering and static rule-based engines still power many recommendation systems. But they struggle with real-time inventory changes, complex product queries, and contextual nuance.

This is where RAG, short for Retrieval Augmented Generation, fundamentally changes the architecture of AI in e-commerce.

Rather than relying solely on historical training data, RAG systems retrieve real-time, domain-specific information before generating responses. In product discovery workflows, this retrieval layer dramatically improves factual accuracy, personalization depth, and recommendation relevance.


Glossary of Key Terms:

RAG (Retrieval Augmented Generation). An AI technique that retrieves real-time, authoritative information from external sources to ground generative outputs and improve accuracy.

AI Shopping Assistant. An AI system that interacts with customers to provide personalized product recommendations, answer queries, and guide purchasing decisions.

Knowledge Base. A structured repository of product information, reviews, specifications, inventory data, and policies that the RAG system queries to generate accurate recommendations.

Vector Database. A specialized database that stores numerical embeddings of documents or products, enabling semantic similarity search for retrieval in AI systems.

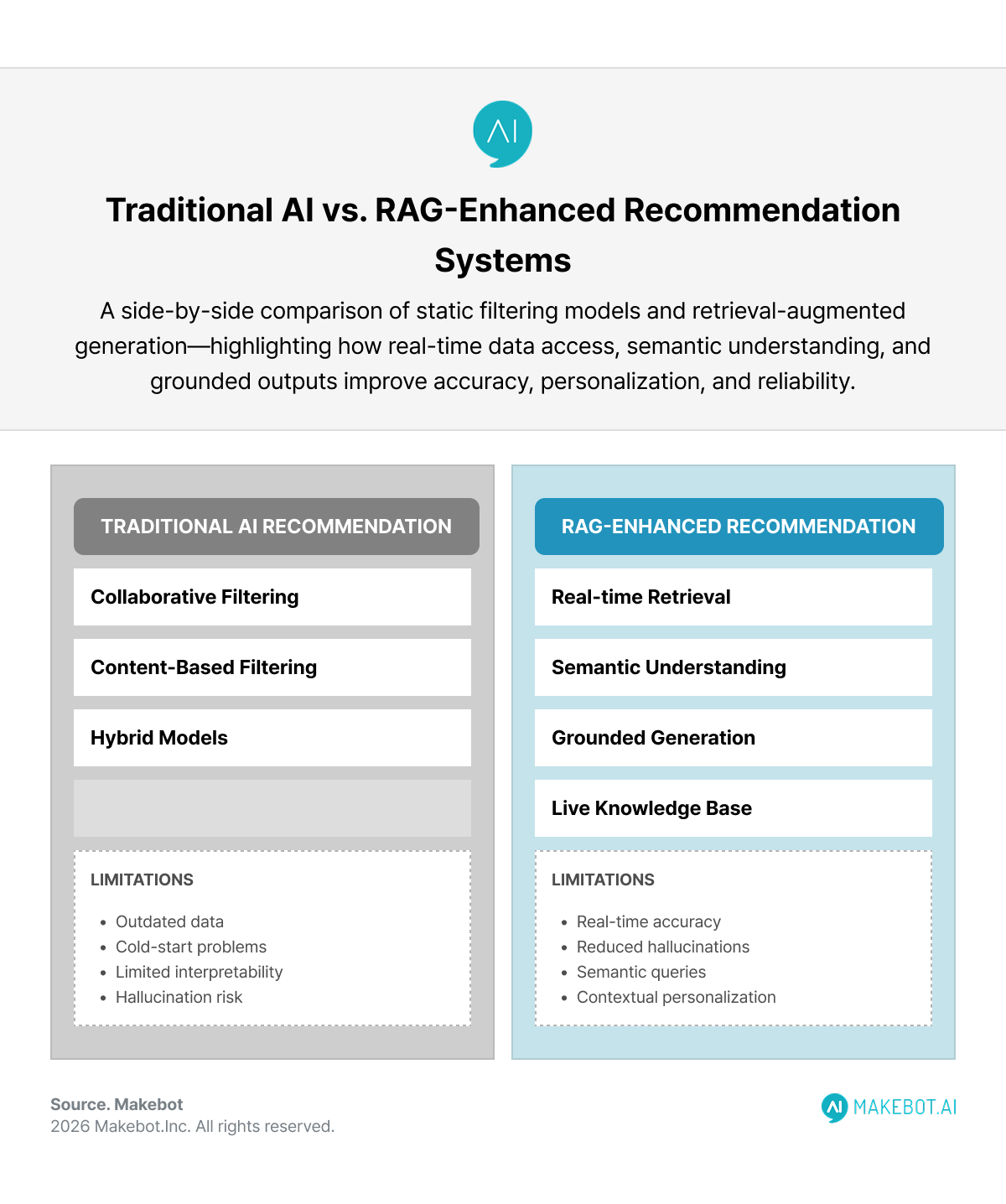

The Structural Problem with Traditional AI Product Recommendation

Classic AI Product Recommendation systems rely on three main approaches:

- Collaborative filtering (users who bought X also bought Y)

- Content-based filtering (matching product attributes)

- Hybrid models combining both

These models perform well in stable catalogs with structured metadata. However, they encounter systemic limitations:

- Outdated product descriptions or pricing

- Cold-start problems for new SKUs

- Limited ability to interpret vague queries

- Inability to synthesize unstructured reviews, FAQs, or policy documents

- Hallucination risk in generative assistants

A standard LLM-based assistant without retrieval compounds the problem. It may generate plausible—but inaccurate—recommendations based on stale training data.

RAG addresses these gaps by grounding generative output in live, authoritative sources.

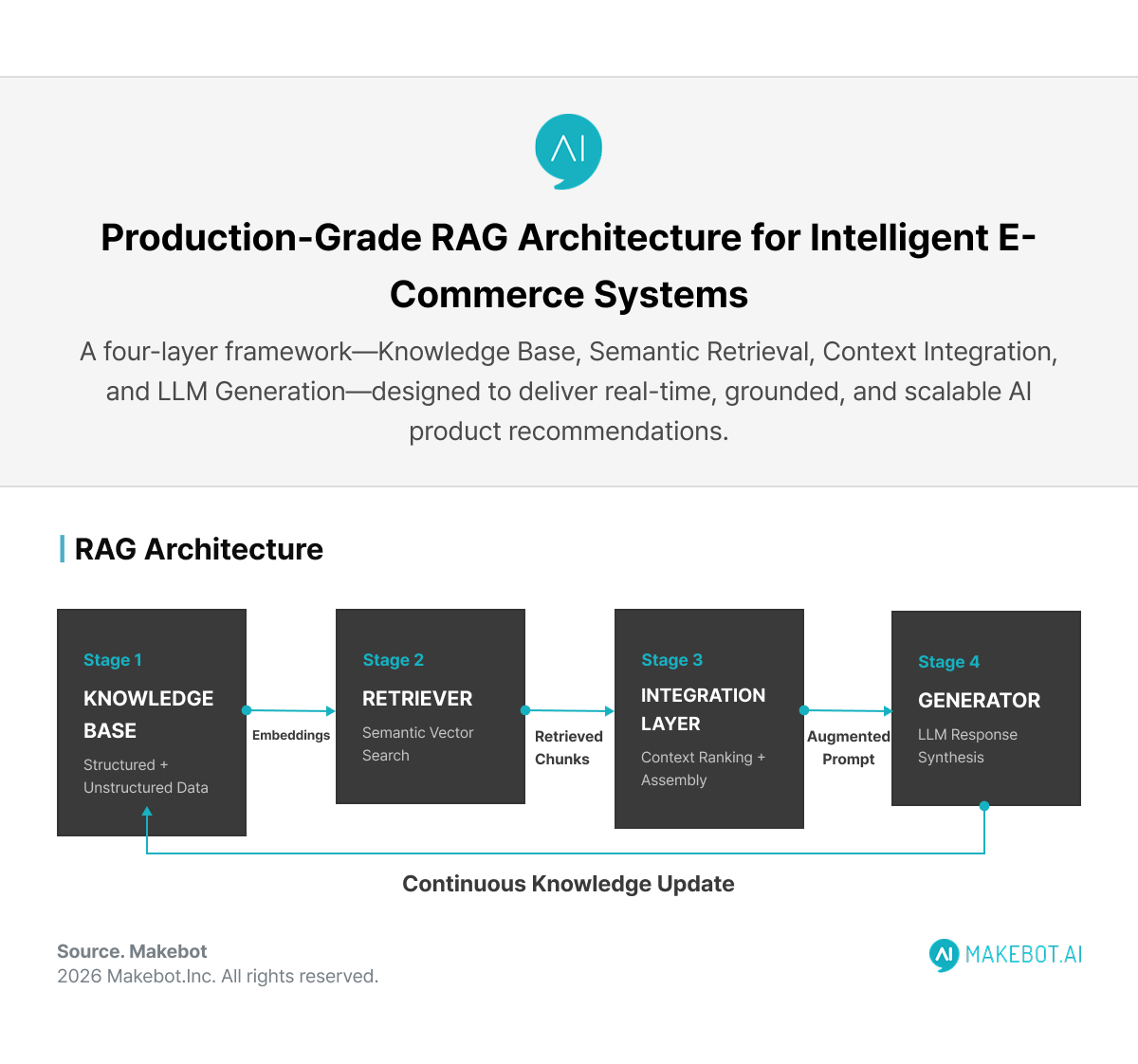

How RAG in E-commerce Works at the System Level

A production-grade RAG architecture for e-commerce typically includes four core components:

1. Knowledge Base

Product catalogs, reviews, spec sheets, inventory databases, policies, user profiles, and behavioral logs are chunked and embedded into vector representations.

2. Retriever

User queries are converted into embeddings and matched semantically against the vector database (e.g., FAISS, Pinecone, Weaviate).

3. Integration Layer

The retrieved documents are reranked, filtered, and assembled into a context window optimized for the generator.

4. Generator (LLM)

The language model produces a grounded response using retrieved product information.

Instead of guessing which “waterproof hiking boots under $200” are relevant, the system retrieves:

- Updated product availability

- Waterproof ratings

- Terrain suitability from product specs

- Verified customer reviews

- Current pricing

The generator then produces a contextual recommendation grounded in those results.

This hybrid retrieval-generation loop reduces hallucination risk while preserving natural-language reasoning.
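
The four components above can be sketched in a few lines of Python. This is a minimal illustration rather than a production design: the catalog entries, SKUs, prices, and function names (`embed`, `retrieve`, `integrate`, `generate`) are hypothetical, the bag-of-words `embed` stands in for a learned embedding model, and `generate` only builds the prompt that an LLM would receive.

```python
import math
import re
from collections import Counter

# Hypothetical mini catalog standing in for the knowledge base.
CATALOG = [
    {"sku": "BOOT-1", "text": "waterproof hiking boots gore-tex rocky terrain", "price": 179.0, "in_stock": True},
    {"sku": "BOOT-2", "text": "waterproof hiking boots leather alpine terrain", "price": 249.0, "in_stock": True},
    {"sku": "SHOE-1", "text": "lightweight running shoes breathable mesh", "price": 89.0, "in_stock": True},
    {"sku": "BOOT-3", "text": "waterproof hiking boots trail", "price": 149.0, "in_stock": False},
]

def embed(text):
    """Toy embedding: a term-count vector (real systems use a learned model)."""
    return Counter(re.findall(r"[a-z0-9-]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, k=3):
    """Retriever: rank catalog entries by similarity to the query."""
    q = embed(query)
    ranked = sorted(CATALOG, key=lambda d: cosine(q, embed(d["text"])), reverse=True)
    return ranked[:k]

def integrate(docs, max_price):
    """Integration layer: filter on structured fields and assemble the context."""
    kept = [d for d in docs if d["in_stock"] and d["price"] <= max_price]
    return "\n".join(f'{d["sku"]}: {d["text"]} (${d["price"]:.2f})' for d in kept)

def generate(query, context):
    """Generator stub: a real system would send this prompt to an LLM."""
    return f"Answer using only these products:\n{context}\n\nQuestion: {query}"

query = "waterproof hiking boots under $200"
context = integrate(retrieve(query), max_price=200)
prompt = generate(query, context)
```

With this toy data, only `BOOT-1` passes both the stock and price filters, so it is the only product that reaches the prompt.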


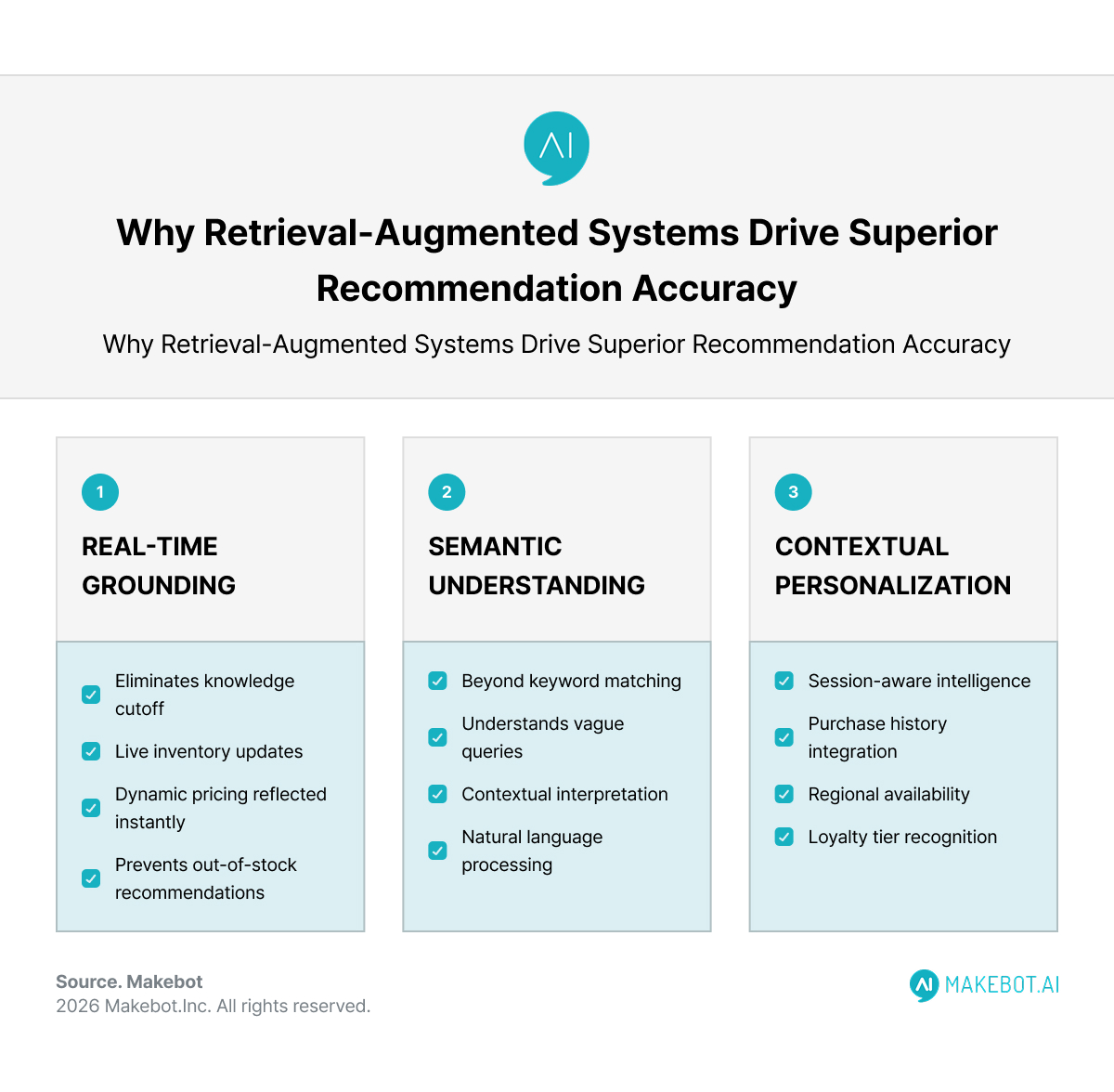

Why Retrieval Improves Recommendation Accuracy

1. Real-Time Grounding

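Static LLMs operate within a knowledge cutoff. RAG works around this constraint for any information held in the knowledge base.

Inventory updates, dynamic pricing, flash sales, and SKU changes are reflected as soon as the underlying index or database is updated, because the retriever queries those sources at request time.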

This prevents one of the most damaging e-commerce failures: recommending products that are out of stock.
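
One way to picture this grounding step is a final check of retrieved candidates against live inventory before anything reaches the generator. The sketch below is illustrative only: `LIVE_INVENTORY`, `ground_in_live_data`, and all SKUs and prices are hypothetical, and a real deployment would call an inventory database or API instead of reading a dict.

```python
# Hypothetical live inventory, keyed by SKU. In production this would be a
# database or API call rather than an in-memory dict.
LIVE_INVENTORY = {
    "BOOT-1": {"stock": 12, "price": 169.0},
    "BOOT-2": {"stock": 0, "price": 249.0},
}

def ground_in_live_data(candidates, inventory):
    """Drop unavailable candidates and overwrite indexed prices with live ones."""
    grounded = []
    for item in candidates:
        live = inventory.get(item["sku"])
        if live is None or live["stock"] <= 0:
            continue  # unknown or sold out: never recommend it
        grounded.append({**item, "price": live["price"], "stock": live["stock"]})
    return grounded

# Candidates as returned by the retriever, with prices from the last indexing run.
retrieved = [
    {"sku": "BOOT-1", "name": "Trail boot", "price": 179.0},
    {"sku": "BOOT-2", "name": "Alpine boot", "price": 249.0},
    {"sku": "BOOT-9", "name": "Discontinued boot", "price": 99.0},
]
available = ground_in_live_data(retrieved, LIVE_INVENTORY)
```

Here only `BOOT-1` remains, and its price is refreshed from the live record rather than the stale indexed value.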

2. Semantic Understanding of Vague Queries

Traditional search engines rely on keyword matching. RAG systems rely on semantic similarity.

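Suppose a user types: “I need a travel-friendly laptop with long battery life for remote work.”

A pure filtering system might struggle, because phrases such as “travel-friendly” do not map directly to a structured catalog attribute.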

A RAG-enabled AI Shopping Assistant retrieves documents containing:

- Battery duration benchmarks

- Weight specifications

- Portability reviews

- Remote productivity comparisons

The generator synthesizes them into a coherent recommendation, improving conversion probability.

3. Contextual Personalization

Personalization has evolved from historical behavior to session-aware intelligence.

RAG enables dynamic context injection, such as:

- Current browsing session

- Purchase history

- Warranty data

- Regional availability

- Loyalty tier

Instead of retraining the model, businesses update the knowledge base.

This decoupling significantly reduces computational cost compared to fine-tuning.
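
Context injection can be as simple as assembling the prompt from per-request data. The following is a rough sketch under stated assumptions: `build_prompt` and the context field names (`region`, `loyalty_tier`, `recently_viewed`) are hypothetical, and the laptop figures are made-up sample data.

```python
def build_prompt(query, retrieved_docs, customer_context):
    """Assemble a prompt from the query, retrieved passages, and per-request context."""
    context_lines = [f"- {key}: {value}" for key, value in customer_context.items()]
    doc_lines = [f"- {doc}" for doc in retrieved_docs]
    return "\n".join(
        ["Customer context:"] + context_lines
        + ["Retrieved product information:"] + doc_lines
        + [f"Question: {query}"]
    )

# Hypothetical field names; real values would come from session and CRM stores.
customer = {"region": "EU", "loyalty_tier": "gold", "recently_viewed": "ultrabooks"}
docs = ["Laptop A: 1.1 kg, 18 h battery", "Laptop B: 1.4 kg, 12 h battery"]
prompt = build_prompt("Which laptop suits frequent travel?", docs, customer)
```

Changing what the assistant knows about a customer or product then means changing the inputs to this function, not the model weights.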

Quantifying the Market Shift

The global RAG market was valued at approximately $1.3 billion in 2024 and is projected to reach $74.5 billion by 2034, reflecting ~49.9% CAGR.
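
As a quick sanity check, the growth rate implied by those two figures can be recomputed from the standard compound annual growth rate formula. The snippet uses only the numbers quoted above; the variable names are illustrative.

```python
start_value = 1.3    # USD billions, 2024 (figure quoted above)
end_value = 74.5     # USD billions, 2034 projection (figure quoted above)
years = 2034 - 2024

# CAGR = (end / start) ** (1 / years) - 1
cagr = (end_value / start_value) ** (1 / years) - 1
print(f"Implied CAGR: {cagr:.1%}")
```

The result is consistent with the roughly 49.9% rate cited.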

This projected growth reflects enterprise demand for:

- Reduced hallucination risk

- Cost-efficient AI scaling

- Real-time domain knowledge integration

- Trustworthy AI outputs

For e-commerce operators, the ROI manifests in measurable KPIs:

- Higher conversion rates

- Increased average order value

- Improved query resolution rates

- Reduced support costs

- Lower product return rates

Advanced RAG Techniques for E-Commerce

Not all RAG systems are equal.

Hybrid Search (Keyword + Vector)

Combining BM25 keyword retrieval with semantic vector search improves both recall and precision in product catalogs with structured attributes.
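
The article does not say how the two result lists are merged. One common option, assumed here purely for illustration, is reciprocal rank fusion (RRF), which needs only the rank positions from each retriever. The function name, the hit lists, and the SKUs below are hypothetical; `k=60` is a conventional default for RRF.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of IDs; items ranked high in any list score well."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

# Hypothetical outputs of a BM25 keyword search and a vector search.
keyword_hits = ["BOOT-1", "BOOT-3", "BOOT-2"]
vector_hits = ["BOOT-2", "BOOT-1", "SHOE-7"]
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
```

Products that appear in both lists (`BOOT-1`, `BOOT-2`) rise above those found by only one retriever.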

Reranking & Contextual Compression

Reducing irrelevant passages before prompt injection prevents context-window overload.
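
A simple form of this step keeps the best-scoring passages until a token budget is reached. The sketch below assumes a reranker has already attached a relevance score to each passage; `compress_context`, the scores, and the passages are hypothetical, and word counts stand in for real token counts.

```python
def compress_context(passages, max_tokens, min_score=0.0):
    """Keep the highest-scoring passages that fit within a token budget.

    `passages` is a list of (score, text) pairs from a reranker. Token counts
    are approximated by whitespace-separated words, which is an assumption;
    a real pipeline would use the generator's own tokenizer.
    """
    selected, used = [], 0
    for score, text in sorted(passages, key=lambda p: p[0], reverse=True):
        cost = len(text.split())
        if score < min_score or used + cost > max_tokens:
            continue  # too weak or too long for the remaining budget
        selected.append(text)
        used += cost
    return selected

passages = [
    (0.91, "Boot A is rated waterproof to 10,000 mm."),
    (0.15, "Our store was founded in 1998."),
    (0.78, "Boot A suits rocky terrain according to verified reviews."),
]
context = compress_context(passages, max_tokens=20, min_score=0.3)
```

The low-scoring store-history passage is dropped, leaving the two product passages within the budget.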

Multi-hop Retrieval

For complex questions such as:

“Which laptop under $1500 has the best battery life and supports Adobe Premiere?”

The system may first retrieve product candidates, then retrieve performance benchmarks, then synthesize.
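
That three-step flow can be sketched with two toy data stores. Everything below is hypothetical sample data, including the SKUs, prices, battery figures, and the `premiere_supported` flag; the function names are illustrative, and the final step is a plain selection where a real system would hand the enriched candidates to an LLM.

```python
# Hypothetical stores: one for catalog entries, one for benchmark documents.
PRODUCTS = [
    {"sku": "LAP-1", "price": 1399, "battery_hours": 17},
    {"sku": "LAP-2", "price": 1499, "battery_hours": 12},
    {"sku": "LAP-3", "price": 1899, "battery_hours": 20},
]
BENCHMARKS = {
    "LAP-1": {"premiere_supported": True},
    "LAP-2": {"premiere_supported": True},
    "LAP-3": {"premiere_supported": True},
}

def hop_one(max_price):
    """First hop: retrieve candidate products within budget."""
    return [p for p in PRODUCTS if p["price"] < max_price]

def hop_two(candidates):
    """Second hop: retrieve benchmark data for each candidate from hop one."""
    return [{**p, **BENCHMARKS.get(p["sku"], {})} for p in candidates]

def synthesize(enriched):
    """Final step: pick the supported candidate with the longest battery life."""
    supported = [p for p in enriched if p.get("premiere_supported")]
    return max(supported, key=lambda p: p["battery_hours"], default=None)

best = synthesize(hop_two(hop_one(max_price=1500)))
```

`LAP-3` has the longest battery life overall but is excluded at the first hop, so the second hop never fetches its benchmarks.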

Graph-Enhanced Retrieval

Shopping graphs connect products through attribute relationships (e.g., waterproof → Gore-Tex → hiking category).

This enables deeper recommendation reasoning.
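
A small sketch of the idea, using the waterproof example above: a breadth-first traversal over an attribute graph collects related concepts and products within a hop limit. The graph contents, the product node names, and `related_nodes` are hypothetical.

```python
from collections import deque

# Hypothetical attribute graph; edges point from a concept to related nodes.
GRAPH = {
    "waterproof": ["gore-tex"],
    "gore-tex": ["hiking"],
    "hiking": ["trail-boot-a", "trail-boot-b"],
}

def related_nodes(start, max_hops):
    """Breadth-first traversal returning nodes reachable within `max_hops` edges."""
    seen, queue, found = {start}, deque([(start, 0)]), []
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in GRAPH.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                found.append(neighbor)
                queue.append((neighbor, depth + 1))
    return found

reachable = related_nodes("waterproof", max_hops=3)
```

The hop limit controls how far the recommendation reasoning may wander from the original attribute.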

Trade-Offs and Real-World Constraints

While RAG improves accuracy in e-commerce, it introduces complexity.

Infrastructure Costs

RAG requires:

- Vector databases

- Embedding pipelines

- Orchestration layers

- LLM inference endpoints

Mitigation strategies:

- Caching high-frequency queries

- Hybrid search instead of vector-only search

- Threshold-based LLM invocation
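
Two of these mitigations, caching and threshold-based invocation, can be combined in one small function. This is a sketch under stated assumptions: `call_llm` and `top_match` are stand-ins for a real LLM client and retriever, the FAQ text and similarity scores are made up, and the 0.9 threshold is arbitrary.

```python
from functools import lru_cache

LLM_CALLS = 0  # counter so the effect of caching is observable

def call_llm(prompt):
    """Stand-in for an LLM endpoint; hypothetical, replace with a real client."""
    global LLM_CALLS
    LLM_CALLS += 1
    return f"LLM answer for: {prompt}"

def top_match(query):
    """Stand-in retriever returning (answer, similarity score); values are made up."""
    faq = {"return policy": ("Returns are accepted within 30 days.", 0.95)}
    return faq.get(query, ("", 0.0))

@lru_cache(maxsize=1024)
def answer(query, threshold=0.9):
    """Serve confident retrieval hits directly; call the LLM only below the threshold."""
    text, score = top_match(query)
    if score >= threshold:
        return text
    return call_llm(query)

answer("return policy")          # answered from retrieval, no LLM call
answer("boots for wet trails")   # falls through to the LLM
answer("boots for wet trails")   # repeated query, served from the cache
```

After these three calls the stand-in LLM has been invoked once. A production cache would also need an expiry policy so that cached answers do not outlive price or stock changes.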

Latency

Retrieval + generation increases response time. Optimized architectures use:

- Pre-indexed embeddings

- Efficient ANN search

- Streaming LLM responses

Hallucination Risk Persists

Although RAG reduces hallucinations, it does not eliminate them. Corrective RAG pipelines include:

- Post-generation validation

- Source citation

- Confidence scoring
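
Post-generation validation can start with something as narrow as checking that every price in the generated answer also appears in a retrieved source. The sketch below shows only that single check; `validate_prices`, the sample sources, and the sample answers are hypothetical, and a fuller validator would cover stock status, specifications, and policy claims as well.

```python
import re

def validate_prices(answer, sources):
    """Return prices quoted in `answer` that do not appear in any retrieved source."""
    pattern = r"\$\d+(?:\.\d{2})?"
    allowed = set()
    for source in sources:
        allowed.update(re.findall(pattern, source))
    return [price for price in re.findall(pattern, answer) if price not in allowed]

sources = ["Trail Boot A, waterproof, $179.00", "Trail Boot B, leather, $149.00"]
good = "Trail Boot A costs $179.00 and is waterproof."
bad = "Trail Boot A costs $129.00 and is waterproof."

unsupported_good = validate_prices(good, sources)
unsupported_bad = validate_prices(bad, sources)
```

An empty result means every quoted price is backed by a source; a non-empty one can trigger regeneration or a fallback response.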

Beyond Search: Expanding Use Cases

Customer Support

An AI Shopping Assistant can retrieve warranty policies, order tracking details, and return eligibility in real time.

NLP-to-SQL Retrieval

RAG can translate natural language into structured product database queries.
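
The shape of such a pipeline can be shown with Python's built-in `sqlite3` module. In this sketch the translation step is hard-coded: `translate_to_sql` is a hypothetical stand-in for the LLM, and the table schema and rows are made-up sample data. Returning a parameterized query keeps user-supplied values out of the SQL string.

```python
import sqlite3

def translate_to_sql(question):
    """Stand-in for the LLM step. A real system would generate the query from
    the question and the database schema; here it is hard-coded."""
    return (
        "SELECT name FROM products WHERE category = ? AND price < ? ORDER BY price",
        ("boots", 200),
    )

# Hypothetical product table with sample rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (name TEXT, category TEXT, price REAL)")
conn.executemany(
    "INSERT INTO products VALUES (?, ?, ?)",
    [("Trail Boot A", "boots", 179.0), ("Alpine Boot", "boots", 249.0), ("Road Shoe", "shoes", 89.0)],
)

sql, params = translate_to_sql("Which boots cost less than $200?")
rows = [name for (name,) in conn.execute(sql, params)]
```

Generated SQL should be validated or restricted to read-only access before it runs against a production database.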

Multimodal Recommendation

Future systems combine RAG with image embeddings:

- Upload a picture → retrieve similar products → generate recommendations

Invoice & Document Extraction

Enterprise e-commerce operations use RAG pipelines to process invoices and summarize structured data.

Strategic Implications for AI in E-commerce

RAG does more than enhance recommendations—it redefines search economics.

In a zero-click future where AI assistants generate direct answers, product discovery shifts from page ranking to contextual relevance.

The competitive advantage moves toward:

- Better knowledge base structuring

- Superior retrieval tuning

- Cleaner data pipelines

- Controlled governance

Organizations that treat RAG as merely a search augmentation tool will fall behind those that treat it as an architecture shift.

Conclusion: Why RAG Is the New Recommendation Engine

The evolution of AI Product Recommendation is no longer about predicting “what others bought.”

It is about retrieving what is relevant right now—and generating an answer that is factually grounded, personalized, and actionable.

RAG enables this by:

- Anchoring generation in real-time knowledge

- Decoupling model intelligence from content updates

- Reducing hallucinations

- Improving trust

As customer expectations evolve toward conversational commerce, the integration of Retrieval Augmented Generation into every modern E-commerce Platform will transition from competitive edge to operational necessity.

The future of AI in e-commerce belongs to systems that retrieve first and generate second.

From Architecture to Deployment: Build Production-Grade RAG with Makebot

- High-quality product data pipelines

- Hybrid retrieval optimized for accuracy and speed

- Workflow-embedded generative systems

The next generation of RAG in E-commerce will not rely on generic chatbot wrappers. It will depend on scalable, cost-efficient architectures capable of handling unstructured product catalogs, reviews, policy documents, and dynamic inventory systems.

Showcasing Korea’s AI Innovation: Makebot’s HybridRAG Framework Presented at SIGIR 2025 in Italy. Read here!

Makebot’s HybridRAG framework—presented at SIGIR 2025—demonstrates how offline QA pre-generation combined with semantic retrieval significantly improves accuracy while reducing runtime costs.

For retailers and marketplace operators building advanced AI Shopping Assistant systems, this means:

- Faster product discovery with reduced hallucination risk

- Context-aware recommendations grounded in live data

- Cost-optimized large-scale retrieval pipelines

- Enterprise-ready deployment with governance and explainability

Considering how to implement scalable, high-precision AI Product Recommendation systems using Retrieval Augmented Generation?

👉 Start your AI transformation: www.makebot.ai

📩 Inquiries: b2b@makebot.ai

