LLM Optimization for B2B Marketing: Architecture, RAG Pipelines, and AI Strategies for Enterprise Growth

How LLM architecture and RAG systems reshape B2B marketing into scalable growth engines

Artificial intelligence is reshaping how organizations generate demand, nurture leads, and close complex enterprise deals. In particular, Large Language Models (LLMs) have evolved from experimental productivity tools into core infrastructure for digital growth engines. For B2B organizations with long sales cycles, multi-stakeholder buying committees, and data-heavy decision processes, the strategic application of LLM Optimization can dramatically increase marketing efficiency, pipeline generation, and revenue predictability.

Yet the conversation around AI in marketing often remains superficial, focused on content automation rather than the deeper architectural and strategic shifts required to make AI a driver of B2B growth. In practice, successful deployments require careful integration across data systems, CRM platforms, analytics pipelines, and knowledge bases.

This article explores how organizations can design a robust LLM Optimization strategy—combining system architecture, data engineering, and AI-driven marketing operations—to transform AI in B2B marketing into a scalable growth engine.

Glossary of Technical Key Terms

- Large Language Models (LLMs) – AI systems trained on massive text datasets that can understand, generate, and analyze natural language to perform tasks such as content generation, summarization, and decision support.

- Retrieval-Augmented Generation (RAG) – An AI architecture that combines large language models with external data retrieval systems so the model can access up-to-date or domain-specific information before generating responses.

- Vector Embeddings – Mathematical representations of text or data that convert words and documents into numerical vectors, allowing AI systems to perform semantic search and similarity matching.

- Parametric Knowledge – Information stored within an AI model’s internal parameters during training, enabling it to generate responses based on learned patterns without accessing external databases.

- Agentic AI – A type of AI system designed to autonomously perform multi-step tasks, make decisions, and execute workflows with minimal human intervention.


The Structural Shift: Why LLMs Matter in B2B Marketing

The B2B buying journey has undergone a fundamental transformation. Buyers increasingly research solutions using AI-driven interfaces rather than traditional search engines, meaning brand discovery now happens inside generative systems.

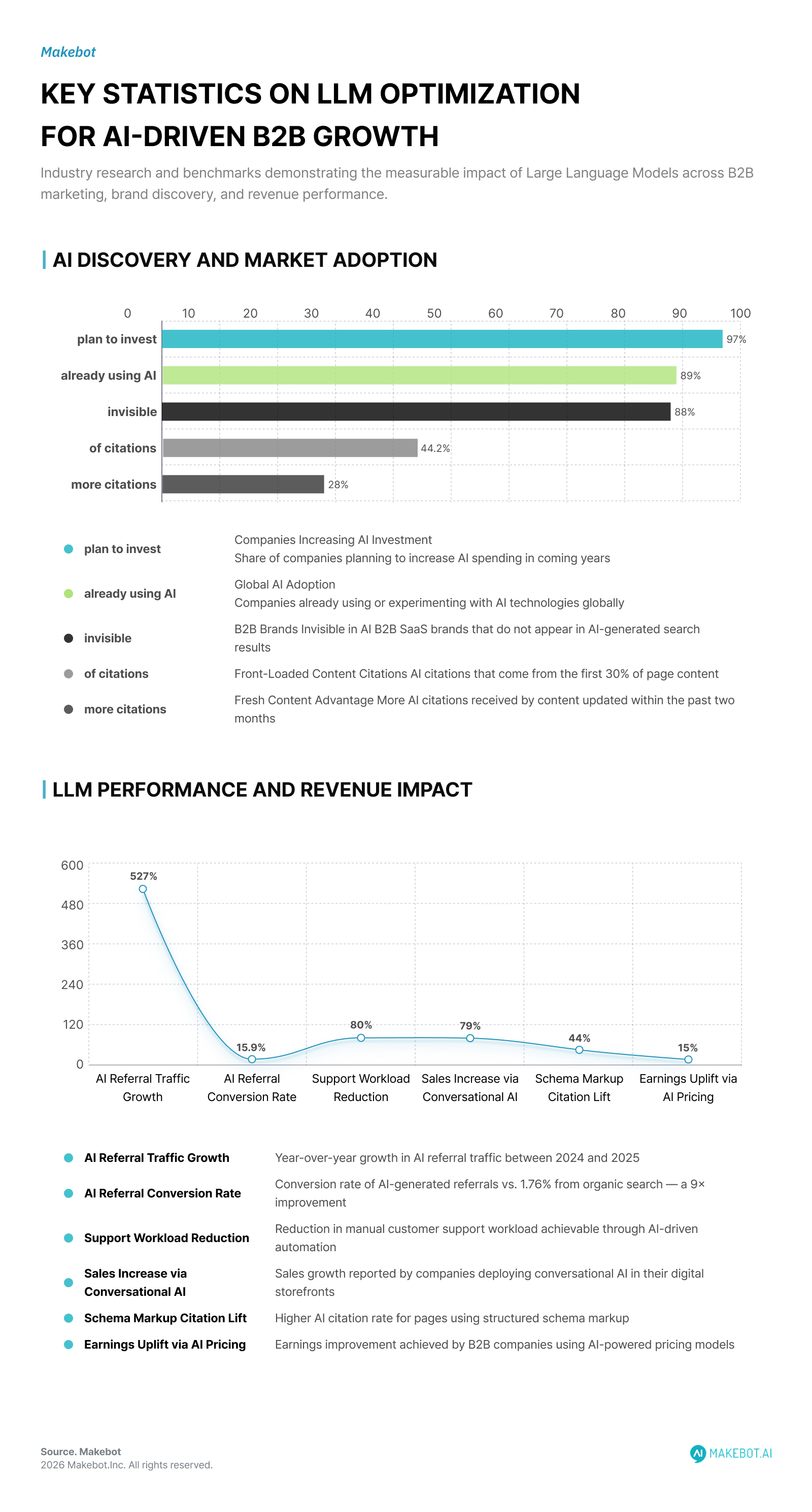

Recent industry data highlights the magnitude of this shift:

- AI referral traffic grew 527% year-over-year between 2024 and 2025.

- ChatGPT alone processes 2.5 billion prompts daily from 883 million monthly users.

- AI-generated referrals convert at 15.9% compared to 1.76% from traditional organic search, a 9× improvement in conversion efficiency.

- Yet only 12% of B2B SaaS brands appear in AI-generated search results, leaving 88% invisible during early vendor evaluation.

This shift fundamentally changes marketing dynamics. Instead of ranking among ten blue links, brands must now compete for inclusion within AI-generated shortlists of vendors or solutions.

In this environment, LLM Optimization becomes analogous to the role traditional SEO played in the early 2000s—except with deeper implications for brand authority, knowledge representation, and data accessibility.

The Architecture of AI-Driven B2B Marketing

A robust AI in B2B marketing strategy begins with architecture rather than tools. Organizations that treat AI merely as a content generator rarely see measurable growth.

Instead, effective LLM-driven marketing systems typically follow a multi-layered architecture:

1. Data Layer

The foundation consists of structured and unstructured data sources, including:

- CRM datasets (accounts, contacts, pipeline stages)

- Behavioral analytics (website activity, product usage)

- Market intelligence (industry reports, financial filings)

- Customer interaction logs (emails, support tickets, transcripts)

These datasets provide the contextual signals that LLM systems require to generate meaningful insights.

2. Retrieval-Augmented Generation (RAG)

Modern Large Language Models rarely operate in isolation. Instead, enterprise systems rely on Retrieval-Augmented Generation (RAG) pipelines that dynamically query external data.

The architecture typically includes:

- Vector embeddings for semantic search

- Knowledge bases containing product and industry documentation

- API connectors to CRM and analytics tools

- Context injection pipelines

This approach allows LLMs to synthesize insights from multiple data sources while minimizing hallucinations. Notably, 60% of AI responses rely on parametric knowledge learned during training, while 40% rely on real-time retrieval through RAG pipelines. This hybrid architecture enables AI to combine historical knowledge with real-time business intelligence.
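The retrieval and context-injection steps described above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the `DOCUMENTS` list, the function names, and the bag-of-words `embed` function are all hypothetical stand-ins (a real system would use a learned embedding model and a vector database), and the final prompt would be passed to whichever LLM the organization uses.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge-base snippets; a real system would index
# product documentation, CRM notes, and analytics exports.
DOCUMENTS = [
    "Our platform integrates with Salesforce and HubSpot for pipeline reporting.",
    "Enterprise pricing starts with an annual contract and volume discounts.",
    "The security module supports single sign-on and audit logging.",
]


def embed(text: str) -> Counter:
    """Toy embedding: term counts (a stand-in for a learned embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, docs: list[str], k: int = 2) -> str:
    """Context injection: place retrieved snippets ahead of the question."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("Does the platform integrate with Salesforce?", DOCUMENTS))
```

Constraining the model to the retrieved context is what ties the answer to current business data rather than to parametric knowledge alone.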

3. AI Decision Layer

Once contextual data is retrieved, LLMs can power multiple decision-making functions across the marketing funnel:

- Next-best-action recommendations for sales teams

- Dynamic segmentation of high-value prospects

- Automated RFP responses and proposal generation

- Personalized email outreach campaigns

For example, one industrial distributor used AI to analyze construction permits and surface new opportunities, adding $1 billion to its pipeline within a single year.

4. Activation Layer

Insights generated by Large Language Models must ultimately trigger actions.

Common integrations include:

- Marketing automation platforms (HubSpot, Marketo)

- CRM workflows (Salesforce, Dynamics)

- Advertising platforms (LinkedIn Ads, Google Ads)

- Customer success tools

This activation layer ensures AI outputs directly influence pipeline generation rather than remaining analytical artifacts.


Core Use Cases Driving AI for B2B Growth

While LLM Optimization can support dozens of workflows, several use cases consistently deliver measurable ROI.

1. AI-Powered Opportunity Discovery

Traditional prospecting often relies on static lead lists or manual research. By contrast, LLM systems can analyze unstructured data sources such as:

- regulatory filings

- earnings transcripts

- public procurement databases

- social media discussions

These models identify emerging opportunities and recommend the next-best opportunity for sales teams. This capability dramatically reduces research time while improving targeting precision.

2. Intelligent Lead Nurturing

Personalization has long been a challenge in B2B marketing due to limited data and manual segmentation. However, Large Language Models can dynamically personalize communication by analyzing:

- firmographic data

- previous interactions

- buyer intent signals

This enables automated yet context-aware outreach at scale. Organizations deploying such systems have reported 15% reductions in sales cycle length through automated lead scoring and follow-ups.
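One simple way to picture automated lead scoring and follow-ups is a weighted score over the three signal types listed above. The sketch below is illustrative only: the `Lead` fields, the weights, the normalization caps, and the routing thresholds are all hypothetical, and a deployed system would typically learn them from historical conversion data.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    name: str
    employee_count: int       # firmographic data
    recent_interactions: int  # previous interactions in a recent window
    intent_signals: int       # buyer intent signals, e.g. pricing-page visits


# Hypothetical weights; tune or learn these from historical outcomes.
WEIGHTS = {"firmographic": 0.3, "interactions": 0.3, "intent": 0.4}


def score(lead: Lead) -> float:
    """Combine the three signals, each capped at 1.0, into a 0-100 score."""
    firmographic = min(lead.employee_count / 1000, 1.0)
    interactions = min(lead.recent_interactions / 5, 1.0)
    intent = min(lead.intent_signals / 3, 1.0)
    total = (
        WEIGHTS["firmographic"] * firmographic
        + WEIGHTS["interactions"] * interactions
        + WEIGHTS["intent"] * intent
    )
    return round(100 * total, 1)


def follow_up(lead: Lead) -> str:
    """Map a score to a next step (thresholds are illustrative)."""
    s = score(lead)
    if s >= 70:
        return "route to sales"
    if s >= 40:
        return "send personalized nurture email"
    return "keep in newsletter segment"


if __name__ == "__main__":
    for lead in (Lead("Acme", 2000, 5, 3), Lead("Smallco", 100, 1, 0)):
        print(lead.name, score(lead), follow_up(lead))
```

In an LLM-driven setup, the language model would draft the personalized message itself, while a transparent score like this decides who receives it and when.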

3. Sales Enablement and Meeting Preparation

B2B sales teams spend significant time preparing for meetings by aggregating information from multiple sources. LLMs can automate this process by generating meeting intelligence summaries that combine:

- customer financials

- past interactions

- competitor insights

- product recommendations

In one deployment, AI-generated meeting briefs integrated 20 data sources, freeing over 10% of sellers’ time for customer engagement.

4. AI-Assisted Proposal Generation

Responding to enterprise RFPs is notoriously time-intensive. Generative AI systems trained on historical proposals can:

- draft responses

- extract relevant documentation

- benchmark competitors

A healthcare organization implementing such a system reduced competitor analysis time by 60–80%, significantly accelerating proposal development.

5. Smart Pricing Optimization

Pricing decisions are often influenced by incomplete market intelligence. AI-driven pricing models can analyze hundreds of variables including:

- deal size

- industry segment

- negotiation history

- product configuration

One B2B services company deploying AI-powered pricing models achieved a 10–15% uplift in earnings by reducing unnecessary discount variance.
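Discount variance can be made concrete with a small calculation. The sketch below uses made-up deal data and a hypothetical z-score threshold to flag discounts that sit well above the norm for their segment; it is a simple statistical stand-in, not the pricing model used by the company mentioned above.

```python
from statistics import mean, pstdev

# Hypothetical closed deals: (industry segment, discount as a fraction of list price).
DEALS = [
    ("enterprise", 0.10),
    ("enterprise", 0.12),
    ("enterprise", 0.30),
    ("mid-market", 0.05),
    ("mid-market", 0.07),
]


def discount_stats(deals):
    """Mean and population standard deviation of discounts per segment."""
    by_segment = {}
    for segment, discount in deals:
        by_segment.setdefault(segment, []).append(discount)
    return {seg: (mean(vals), pstdev(vals)) for seg, vals in by_segment.items()}


def flag_outliers(deals, z_threshold=1.2):
    """Flag deals whose discount is unusually high for their segment."""
    stats = discount_stats(deals)
    flagged = []
    for segment, discount in deals:
        avg, sd = stats[segment]
        if sd > 0 and (discount - avg) / sd > z_threshold:
            flagged.append((segment, discount))
    return flagged


if __name__ == "__main__":
    print(flag_outliers(DEALS))
```

Flagged deals are candidates for review; a fuller pricing model would also condition on deal size, negotiation history, and product configuration.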

LLM Optimization: The New SEO for AI Discovery

Beyond operational automation, LLM Optimization is increasingly critical for brand visibility.

When buyers ask AI systems for recommendations—such as “best cybersecurity platform for mid-market companies”—the model synthesizes answers from training data and web retrieval pipelines.

To appear in those responses, companies must optimize for AI discoverability.

The macroeconomic trajectory of the technology reinforces this shift. According to Grand View Research, the global market for Large Language Models is projected to reach $35.4 billion by 2030, expanding at a compound annual growth rate (CAGR) of 36.9% between 2025 and 2030. This rapid expansion reflects not only the proliferation of LLM-powered applications but also the emergence of LLM Optimization as a strategic discipline comparable to traditional search engine optimization (SEO).
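The quoted projection also implies a starting market size, which a short calculation makes explicit. This sketch assumes five annual compounding periods between 2025 and 2030; the report may define its base year differently, so the result is an implied figure rather than a number taken from the report.

```python
def implied_base(final_value: float, cagr: float, years: int) -> float:
    """Back out the starting value implied by a final value and a CAGR."""
    return final_value / (1 + cagr) ** years


# Figures quoted in the text: $35.4 billion by 2030 at a 36.9% CAGR.
# Assumption: five compounding periods (2025 -> 2030).
base_2025 = implied_base(35.4, 0.369, 5)

if __name__ == "__main__":
    print(f"Implied 2025 market size: ${base_2025:.1f} billion")
```

Under these assumptions the implied 2025 base is roughly $7.4 billion, meaning the market would grow nearly fivefold over the period.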

Several technical factors influence LLM citation rates:

- 44.2% of AI citations come from the first 30% of page content, emphasizing front-loaded information architecture.

- Pages using structured schema markup experience 35–44% higher citation rates.

- Content updated within two months receives 28% more citations from AI crawlers.

Importantly, AI systems prioritize brand mentions over backlinks: mentions correlate roughly three times more strongly with citation visibility than links do. This means the future of digital visibility depends not only on SEO rankings but also on cross-platform authority signals.
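The schema markup and content freshness factors listed above lend themselves to a small automation. The sketch below generates JSON-LD using the schema.org `Article` type and checks whether a page falls inside a two-month freshness window. The function names, the example organization, and the 60-day cutoff are assumptions for illustration; which properties actually influence AI citation is not something this sketch can confirm.

```python
import json
from datetime import date


def article_jsonld(headline: str, org_name: str, modified: date) -> str:
    """Build JSON-LD for a page using the schema.org Article type."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "dateModified": modified.isoformat(),
        "author": {"@type": "Organization", "name": org_name},
    }
    return json.dumps(data, indent=2)


def is_fresh(modified: date, today: date, max_age_days: int = 60) -> bool:
    """True if the page was updated within the window (60 days assumed)."""
    return (today - modified).days <= max_age_days


if __name__ == "__main__":
    markup = article_jsonld(
        "LLM Optimization for B2B Marketing", "Example Co", date(2025, 1, 15)
    )
    # Embed the output in the page inside a <script type="application/ld+json"> tag.
    print(markup)
    print(is_fresh(date(2025, 1, 15), today=date(2025, 2, 15)))
```

Running the freshness check across a content inventory gives a simple list of pages due for an update.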

Strategic Trade-offs and Limitations

Despite the potential of AI for B2B Growth, organizations must navigate several trade-offs.

Data Quality Constraints

LLM systems are only as reliable as their underlying data.

Fragmented CRM records, inconsistent product documentation, or outdated market data can lead to inaccurate outputs.

Hallucination Risk

Even advanced Large Language Models occasionally generate plausible but incorrect responses. In high-stakes contexts such as pricing or contract negotiation, organizations often implement guardrails including:

- retrieval validation

- deterministic rules

- human-in-the-loop review
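The three guardrails above can be combined into a single review step that runs before any AI-drafted pricing response reaches a customer. This is a minimal sketch: the `Draft` structure, the source identifiers, and the 20% discount ceiling are hypothetical placeholders for an organization's own policy.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    text: str
    quoted_discount: float  # discount offered, as a fraction of list price
    sources: list[str]      # IDs of the documents the draft was grounded on


# Hypothetical policy values for illustration.
MAX_DISCOUNT = 0.20
KNOWN_SOURCES = {"pricing-policy-2025", "contract-template-v3"}


def review(draft: Draft) -> str:
    """Apply the guardrails in order and return a routing decision."""
    # Retrieval validation: every cited source must be an approved document.
    if not draft.sources or any(s not in KNOWN_SOURCES for s in draft.sources):
        return "reject: unverified sources"
    # Deterministic rule: discounts above policy never go out automatically.
    if draft.quoted_discount > MAX_DISCOUNT:
        # Human-in-the-loop review: escalate instead of sending.
        return "escalate: human review required"
    return "approve"


if __name__ == "__main__":
    print(review(Draft("We can offer 10% off.", 0.10, ["pricing-policy-2025"])))
    print(review(Draft("We can offer 35% off.", 0.35, ["pricing-policy-2025"])))
    print(review(Draft("We can offer 10% off.", 0.10, ["unknown-blog-post"])))
```

Keeping the rules deterministic and outside the model means a hallucinated figure is caught by code rather than by chance.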

Organizational Adoption Challenges

Technology deployment is often easier than organizational change.

Research indicates that successful AI adoption requires:

- seller training programs

- change management initiatives

- incentive alignment across teams

Without these measures, AI insights may remain unused despite technical availability.


The Future: Agentic AI in B2B Growth Engines

The next phase of LLM Optimization involves autonomous AI agents capable of executing workflows with minimal human supervision.

Rather than simply recommending actions, agentic systems can:

- identify potential prospects

- initiate outreach conversations

- schedule meetings

- refine targeting based on feedback loops

These systems represent the evolution from AI-assisted marketing toward AI-operated revenue engines. Organizations that successfully integrate these capabilities will likely achieve structural advantages in pipeline generation, operational efficiency, and market intelligence.
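The feedback loop at the heart of such a system can be sketched as a single cycle: select prospects, run outreach, measure the reply rate, and adjust targeting. Everything here is hypothetical, including the prospect dictionaries, the `send` callback (which stands in for real outreach and reply tracking), and the threshold-adjustment rule; an actual agent would also plan multi-step tasks through an LLM.

```python
def run_agent_cycle(prospects, threshold, send, target_reply_rate=0.2, step=5):
    """Run one outreach cycle and return (new_threshold, reply_rate).

    `send` is a callable that contacts a prospect and returns True on a reply.
    """
    # Identify potential prospects above the current score threshold.
    contacted = [p for p in prospects if p["score"] >= threshold]
    if not contacted:
        # Nobody qualified: widen targeting for the next cycle.
        return max(0, threshold - step), 0.0

    # Initiate outreach and count replies.
    replies = sum(1 for p in contacted if send(p))
    reply_rate = replies / len(contacted)

    # Feedback loop: tighten targeting when replies are scarce, widen otherwise.
    if reply_rate < target_reply_rate:
        return threshold + step, reply_rate
    return max(0, threshold - step), reply_rate


if __name__ == "__main__":
    prospects = [
        {"name": "A", "score": 80},
        {"name": "B", "score": 60},
        {"name": "C", "score": 30},
    ]
    # Simulated outreach: only high-scoring prospects reply.
    print(run_agent_cycle(prospects, 50, send=lambda p: p["score"] >= 70))
```

Repeating the cycle lets the threshold drift toward the segment that actually responds, which is the refinement behavior described above in its simplest form.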

Conclusion

The rise of Large Language Models marks a structural turning point in B2B marketing.

Companies that deploy LLM Optimization strategically—integrating AI across data architecture, marketing operations, and discovery channels—can unlock powerful advantages in pipeline generation and revenue growth.

However, success requires more than simply adopting AI tools. It demands a comprehensive system that combines:

- robust data infrastructure

- retrieval-based knowledge architectures

- cross-platform brand authority

- continuous optimization loops

In short, AI in B2B marketing is no longer about automation—it is about building intelligent systems that continuously learn, adapt, and scale.

Organizations that treat AI for B2B Growth as an architectural capability rather than a tactical experiment will be the ones shaping the next generation of enterprise marketing.

Organizations seeking to operationalize LLM Optimization at scale require more than generic AI tools. They need production-grade architectures that integrate retrieval, knowledge management, and enterprise data pipelines. Platforms such as Makebot are advancing this capability through innovations like the HybridRAG framework, presented at SIGIR 2025 in Italy, which improves enterprise document understanding by combining LLM reasoning with pre-generated QA knowledge bases for faster and more accurate responses over complex, unstructured data.

This architecture enables organizations to deploy scalable AI systems that reduce latency, improve answer fidelity, and unlock high-value insights from internal knowledge assets.

👉 Start your AI transformation: www.makebot.ai

📩 Inquiries: b2b@makebot.ai
