Why Generative AI Projects Fail and How to Achieve Scalable AI Success

Most AI projects fail not from weak models, but from misaligned strategy, data, and execution.


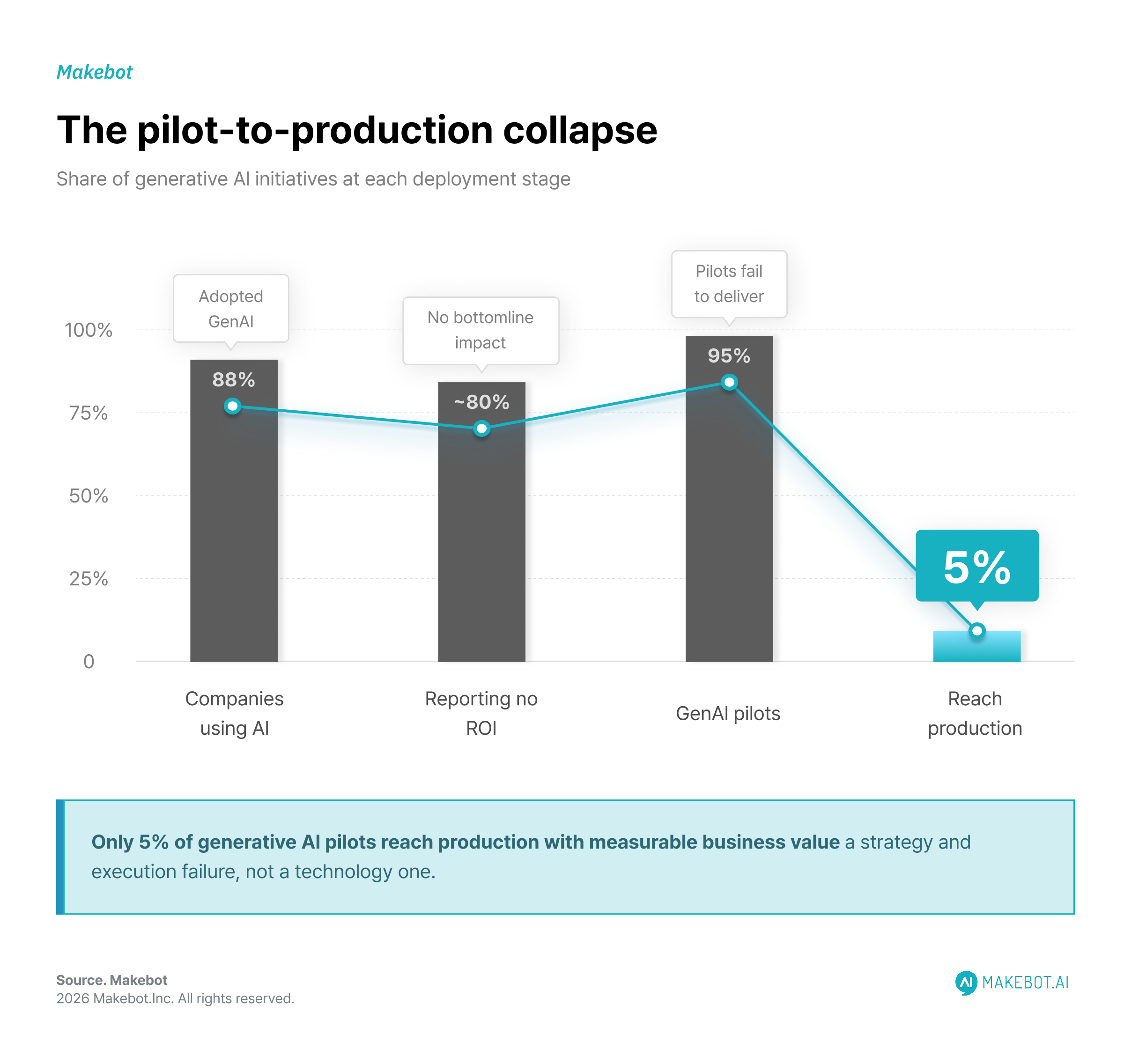

Generative AI has moved from experimentation to boardroom priority at unprecedented speed. Yet beneath the hype lies a stark reality: most organizations are not seeing meaningful returns.

Estimates consistently show that over 80% of AI initiatives fail to deliver measurable business value, with some studies indicating as many as 95% of generative AI pilots never reach production.

This gap between potential and performance is not a technology failure—it is a strategy, execution, and organizational problem.

This article dissects the real reasons behind generative AI project failure rates and outlines how organizations can systematically shift toward AI transformation success.


The GenAI Paradox: High Adoption, Low Impact

The current state of generative AI adoption is paradoxical:

- 88% of companies use GenAI in at least one function (McKinsey)

- Yet nearly 80% report no significant bottom-line impact (McKinsey)

- Only 1% of organizations consider their AI strategy mature (McKinsey)

- 95% of generative AI pilots fail to deliver ROI (MIT Report)

- Just 5% of projects reach production with measurable value (Forbes)

At the macro level, the implications are massive:

- Generative AI has the potential to generate $2.6 trillion to $4.4 trillion in value across industries annually (McKinsey)

- Global AI spending is projected to hit $630 billion by 2028 (IDC)

- Yet 42% of companies abandoned most AI initiatives in 2025 (S&P Global)

This disconnect defines the modern AI landscape: massive investment, minimal realized value.

Why AI Projects Fail: Root Causes Across the Lifecycle

Understanding why AI projects fail requires looking beyond isolated technical issues and recognizing a broader pattern of systemic breakdowns across strategy, data, organizational alignment, and execution. Evidence across multiple studies shows that failure is rarely caused by model performance alone—instead, it emerges from compounding weaknesses across the entire AI lifecycle.

1. The “Science Experiment” Trap

A large share of AI initiatives never progress beyond experimentation, remaining stuck in what many analysts describe as “pilot purgatory.”

- 88–95% of AI pilots fail to reach production

- Many organizations run multiple proofs-of-concept (POCs) without a clear path to scaling

While these initiatives often demonstrate technical feasibility, they fail to establish business viability. The core issue is not capability—it is context.

Organizations frequently prioritize model performance, novelty, or experimentation over measurable outcomes. As a result, AI becomes disconnected from real workflows and decision-making processes.

Key insight: This pattern reflects a fundamental form of generative AI project failure—treating AI as an isolated innovation exercise rather than embedding it within an enterprise AI strategy tied to revenue, cost, or operational impact.

2. Poor Data Quality and Data Readiness

Data remains the most critical—and most underestimated—constraint in AI success.

- 85% of AI failures are linked to poor data quality or availability (Gartner)

- Only 12% of organizations have AI-ready data environments (MIT Sloan Survey)

- Less than 1% of enterprise data is actively used in AI models (IBM)

Even small degradations in data quality can have disproportionate effects. Research shows that just 20% data corruption can reduce model performance by ~10%, highlighting how sensitive AI systems are to data integrity.

The deeper issue is not simply “bad data,” but fragmented data ecosystems—disconnected pipelines, inconsistent governance, and lack of accessibility across teams.

Insight: AI performance is not bounded by algorithmic sophistication, but by data infrastructure maturity. Without strong data foundations, even the most advanced models fail to deliver reliable or scalable outcomes.

3. Lack of Business Alignment

Among the most persistent AI implementation challenges is the disconnect between AI initiatives and core business objectives.

- Only 15% of employees report clear AI strategy communication (Gallup)

- Fewer than 30% of organizations have active CEO-level sponsorship (McKinsey)

This lack of alignment produces predictable outcomes:

- Disconnected and low-impact use cases

- Redundant experimentation across teams

- Solutions that fail to influence revenue, cost structures, or customer outcomes

Research consistently shows that misunderstanding or miscommunicating the problem being solved is one of the primary root causes of AI failure.

Core problem: Organizations often start with the question, “Where can we use AI?” instead of “What business problem are we solving?”


4. Overreliance on Horizontal AI

Many enterprises default to general-purpose AI tools—such as copilots and chatbots—due to ease of deployment and accessibility.

- ~70% of Fortune 500 companies use horizontal AI tools (McKinsey)

While these tools can improve individual productivity, their impact is typically diffuse and difficult to measure at the organizational level.

In contrast, vertical AI solutions—designed for specific industries or workflows—consistently deliver stronger financial outcomes. For example, domain-specific AI implementations have achieved up to 3.6× improvements in operational speed in real-world use cases.

Trade-off:

- Horizontal AI → fast adoption, low measurable ROI

- Vertical AI → slower deployment, high-impact, measurable outcomes

This reflects a broader failure pattern: prioritizing accessibility over applicability.

5. Organizational and Cultural Barriers

AI failure is often rooted in human and organizational dynamics rather than technical limitations.

- 40% of employees require reskilling to work effectively with AI (IBM)

- 52% of individuals express concern about AI, while only ~10% express excitement (Pew Research Center)

- 45% of employees report change fatigue (Forbes)

These dynamics create resistance at multiple levels:

- Lack of trust in AI-generated outputs

- Fear of job displacement

- Low adoption due to unclear value or usability

Moreover, cultural misalignment can actively undermine implementation—employees may bypass or reject systems entirely, even when technically sound.

Conclusion: AI systems do not fail in isolation—they fail when organizations fail to adopt them.

6. Lack of AI Talent and Expertise

Even with strong strategic intent, many organizations lack the internal capabilities required to execute AI initiatives effectively.

- A 50% AI talent gap is projected in the coming years (Thomson Reuters)

- Up to 70% of employees will require upskilling to work with AI systems

This gap affects every stage of the lifecycle:

- Poor model selection and evaluation

- Weak data pipeline design

- Inadequate deployment and monitoring strategies

Without experienced practitioners, organizations often underestimate complexity and overestimate readiness—resulting in fragile systems that fail under real-world conditions.

7. Unrealistic Expectations and ROI Timelines

AI is frequently approached with unrealistic expectations shaped by vendor promises and market hype.

- Expected ROI timelines: 7–12 months

- Actual timelines for meaningful ROI: 2–4 years

At the same time:

- Early-stage enterprise AI delivers only ~5.9% ROI on initial investments (IBM)

This mismatch leads to premature abandonment of initiatives—often just as foundational capabilities begin to mature.

Implication: The issue is not lack of value, but lack of patience and strategic commitment.
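The gap between expected and actual payback can be made concrete with simple arithmetic. The sketch below is illustrative only: the investment, monthly run cost, and benefit ramp are hypothetical figures rather than data from the studies cited above, and `payback_month` is a helper name introduced here for illustration.

```python
from typing import Optional


def payback_month(
    investment: float,
    monthly_benefits: list[float],
    monthly_cost: float,
) -> Optional[int]:
    """Return the first month (1-indexed) in which cumulative net benefit
    covers the upfront investment, or None if it never does."""
    cumulative = -investment
    for month, benefit in enumerate(monthly_benefits, start=1):
        cumulative += benefit - monthly_cost
        if cumulative >= 0:
            return month
    return None


# Hypothetical scenario: benefits start at zero and grow by 5,000 per month
# as adoption and integration mature; running the system costs 20,000 a month.
investment = 950_000.0
monthly_cost = 20_000.0
ramp = [5_000.0 * m for m in range(48)]

month = payback_month(investment, ramp, monthly_cost)
print(f"Payback month: {month}")
```

With these made-up numbers the initiative breaks even in month 25. A team that expected payback within 12 months would see only a growing deficit at that point and might cancel the project before the ramp takes effect.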

8. Infrastructure and Integration Failures

The transition from pilot to production exposes the most critical weaknesses in AI systems.

Common challenges include:

- Legacy systems not designed for AI-scale workloads

- Fragmented or incompatible data pipelines

- Lack of integration with core enterprise systems (ERP, CRM, operations platforms)

Without deep integration, AI remains an isolated layer—unable to influence real business processes or decisions.

Research shows that integration—not model performance—is the primary dividing line between successful and failed AI deployments.

Result: What appears as “pilot success” collapses into “production failure” when systems encounter real-world complexity.


The Hidden Pattern: From Pilot Success to Scaling Failure

Across industries, AI initiatives tend to fail along a predictable trajectory, and the same pattern reveals where measurable improvements emerge when execution is done correctly. In the proof-of-concept (POC) stage, projects often rely on clean, curated datasets and operate under assumptions that do not reflect real-world conditions. Teams also expect quick wins, even though successful implementations typically require 12–18 months to demonstrate measurable business value.

As they move into the pilot phase, deeper organizational issues emerge—particularly siloed teams and weak alignment between business, IT, and data functions—which limit the project’s ability to demonstrate meaningful value. This is a widespread issue, with 62% of organizations struggling with data governance and cross-functional coordination (KPMG).

However, organizations that overcome these barriers begin to see tangible operational gains, including 20–40% faster task completion and average time savings of 2–3 hours per employee, demonstrating that early-stage improvements are achievable when alignment is addressed.

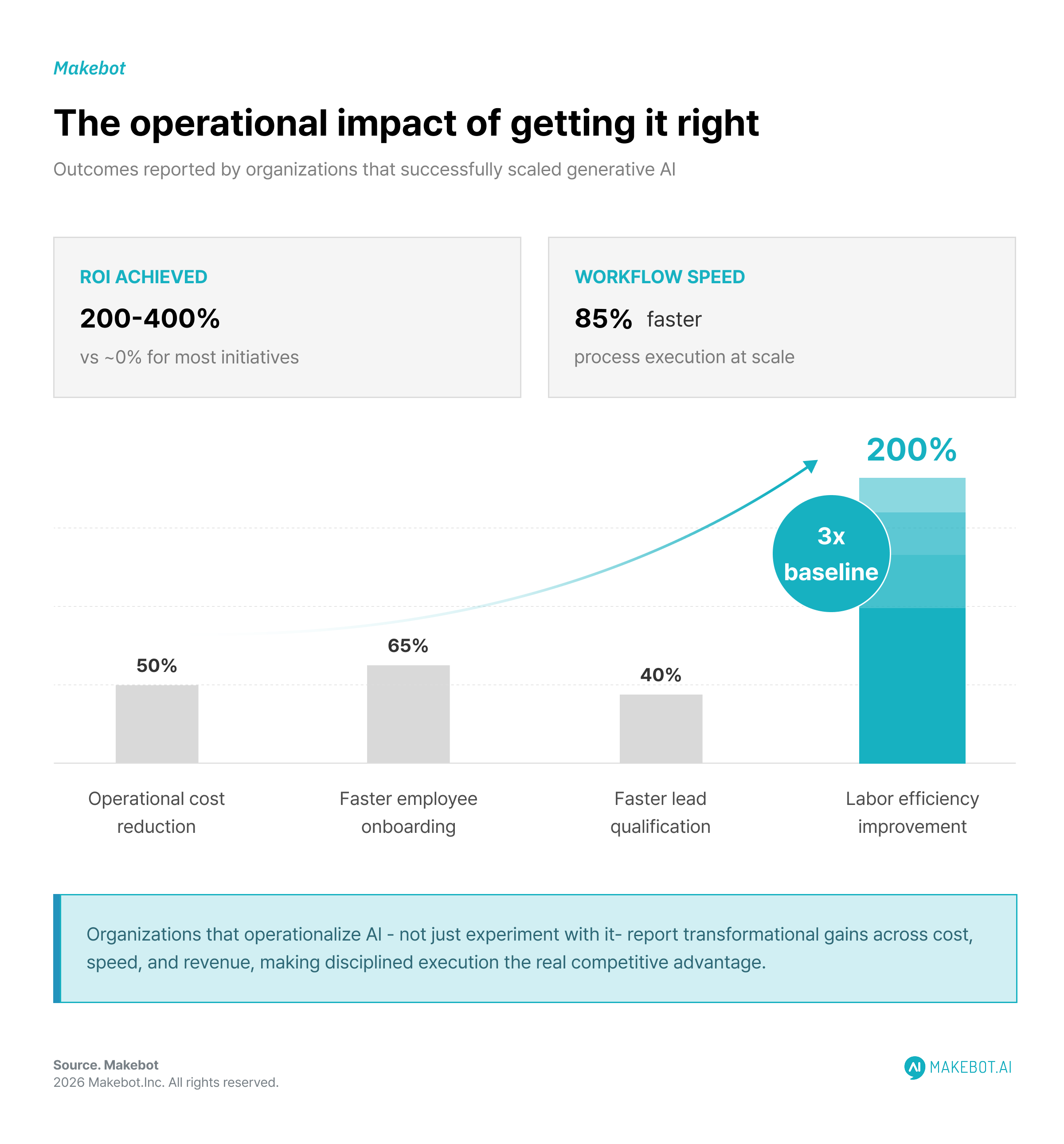

By the time projects reach the production stage, technical and operational challenges take over, including system integration issues, difficulties in scaling infrastructure, and gaps in change management. Yet this is also where the performance gap between failure and success becomes most visible. Organizations that successfully scale generative AI report 200% improvements in labor efficiency, 50% reductions in operational costs, and up to 85% faster process execution, alongside measurable gains in customer experience and revenue impact.

This pattern highlights a critical insight: the real challenge is not building AI systems, but successfully scaling generative AI across the organization to unlock sustained, compounding business value.

What Separates the 5% That Succeed?

Despite widespread failure, a small group of organizations consistently get the AI project success factors right and translate them into real, measurable ROI. These organizations are not just experimenting; they are operationalizing AI at scale.

- Successful implementations generate 200–400% ROI, compared to zero or negative returns for most initiatives

- Organizations report 85% faster workflows, 50% lower operational costs, and 65% faster onboarding processes

- In customer-facing functions, AI delivers 40% faster lead qualification and ~10% improvements in customer retention

More importantly, these gains are not isolated; they compound over time. Early-stage efficiency gains of 5–10% often scale into transformational impact as systems mature and integrate across workflows.

These organizations differ in one fundamental way: they treat AI not as a tool, but as an integrated system of business transformation.

How to Achieve AI Transformation Success

Achieving AI transformation success requires a fundamental shift in how organizations approach AI—not as isolated experiments, but as integrated systems of business execution. The organizations that succeed in scaling generative AI are not necessarily those with the best models, but those with the strongest alignment between strategy, data, and operations.

1. Start with Business Value, Not Technical Possibility

The most effective organizations treat AI as a lever for solving economically meaningful problems. Instead of asking what AI can do, they define what the business needs to achieve—then design AI around those outcomes.

This shift reframes AI from a capability-driven initiative into a value-driven one. It ensures that every deployment contributes directly to performance, rather than existing as a disconnected innovation effort.

2. Build an Execution-Oriented Enterprise AI Strategy

A strong enterprise AI strategy is not a roadmap—it is an operating model. It aligns leadership, standardizes decision-making, and enables reuse across teams.

Rather than allowing fragmented experimentation, leading organizations create shared systems, governance layers, and reusable components that accelerate deployment while maintaining consistency. This is what allows AI to scale beyond isolated use cases and become a cross-functional capability.

3. Treat Data as Infrastructure, Not Input

One of the most overlooked AI project success factors is how organizations conceptualize data. High-performing teams do not treat data as something to feed into models—they treat it as infrastructure that must be continuously managed, refined, and governed.

This includes building pipelines that reflect real operational conditions, ensuring accessibility across teams, and maintaining quality over time. Without this foundation, even well-designed AI systems remain fragile and difficult to scale.
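One way to treat data quality as an ongoing infrastructure concern is to run an automated gate before data reaches a model. The following is a minimal sketch, not a prescribed method: the `check_quality` function, the `QualityReport` type, the field names, and the thresholds are all hypothetical and would need to be adapted to a real pipeline.

```python
from dataclasses import dataclass


@dataclass
class QualityReport:
    missing_rate: float    # share of required fields that are empty or absent
    duplicate_rate: float  # share of rows whose key was already seen
    passed: bool           # True only if both rates are within thresholds


def check_quality(rows, required, key, max_missing=0.02, max_duplicates=0.01):
    """Compute simple completeness and uniqueness metrics for a batch of rows.

    The default thresholds are illustrative assumptions, not recommendations.
    """
    total_fields = len(rows) * len(required)
    missing = sum(1 for r in rows for f in required if r.get(f) in (None, ""))

    seen, duplicates = set(), 0
    for r in rows:
        k = r.get(key)
        if k in seen:
            duplicates += 1
        seen.add(k)

    missing_rate = missing / total_fields if total_fields else 0.0
    duplicate_rate = duplicates / len(rows) if rows else 0.0
    passed = missing_rate <= max_missing and duplicate_rate <= max_duplicates
    return QualityReport(missing_rate, duplicate_rate, passed)


# Hypothetical batch with one missing amount, one blank region, one repeated id.
rows = [
    {"id": 1, "amount": 10.0, "region": "EU"},
    {"id": 2, "amount": None, "region": "US"},
    {"id": 2, "amount": 5.0, "region": ""},
]
report = check_quality(rows, required=["amount", "region"], key="id")
print(report)
```

Running a check like this on every batch, rather than once during a pilot, is what turns data quality from a one-time cleanup into a governed, continuously measured property.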

4. Focus on Contextual Integration, Not Generic Capability

The real value of AI emerges when it is embedded within specific workflows. Generic tools may accelerate individual productivity, but they rarely transform how the business operates.

In contrast, AI systems that are tailored to domain-specific processes—whether in operations, finance, or customer experience—create measurable and sustained impact. The difference lies in integration: AI must be part of the workflow, not an overlay on top of it.

5. Design for Scale from the Beginning

Many organizations build AI solutions that work in controlled environments but fail under real-world complexity. This is not a technical limitation—it is a design flaw.

Successful teams approach AI development with production in mind from the outset. They consider how systems will integrate, how they will be maintained, and how they will evolve over time. Scaling is not a phase—it is a requirement.

6. Align People, Not Just Systems

AI adoption is ultimately a behavioral challenge. Even the most advanced systems fail if they are not trusted, understood, or embedded into daily work.

Organizations that succeed invest in capability building, clear communication, and change management. They make AI usable, relevant, and aligned with how teams actually operate—turning resistance into adoption and adoption into value.

7. Scale Through Compounding Improvements

Transformation does not happen through a single breakthrough. It happens through a sequence of validated improvements that expand over time.

Leading organizations focus on building momentum—starting with targeted use cases, proving value, and systematically extending capabilities across functions. As systems mature and integrate, the impact compounds, turning incremental gains into structural advantage.
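To illustrate how incremental gains compound, the sketch below combines a series of per-stage efficiency improvements multiplicatively. The number of stages and the 5% gain per stage are hypothetical values chosen for illustration, and `compound_gain` is a helper name introduced here.

```python
import math


def compound_gain(stage_gains: list[float]) -> float:
    """Combine per-stage fractional gains (0.05 means 5%) multiplicatively
    and return the total fractional gain."""
    return math.prod(1.0 + g for g in stage_gains) - 1.0


# Hypothetical: eight successive rollouts, each adding a 5% efficiency gain
# on top of the previous state.
total = compound_gain([0.05] * 8)
print(f"Total gain after 8 stages: {total:.1%}")
```

Under these assumptions, eight 5% steps yield a total gain of roughly 48%, compared with 40% if the gains were merely added together. The difference widens as more stages build on each other, which is why sequencing validated improvements matters more than any single breakthrough.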

8. Combine Internal Context with External Execution

One of the most underestimated AI implementation challenges is execution. Building scalable AI systems requires both deep domain knowledge and experience in deployment.

Organizations that succeed recognize this and avoid relying solely on internal capabilities. By combining internal expertise with external implementation experience, they accelerate progress, avoid common pitfalls, and increase the likelihood of long-term success.


The Real Lesson: AI Failure Is a Strategy Failure

The dominant narrative frames generative AI project failure as a technology problem. The evidence suggests otherwise.

Failures stem from:

- Poor strategic alignment

- Weak data foundations

- Organizational silos

- Cultural resistance

Meanwhile, successful organizations treat AI as:

- A business transformation initiative

- A multi-year capability investment

- A cross-functional system, not a tool

Final Thought: From Hype to Discipline

The next phase of generative AI adoption will not be defined by who experiments fastest—but by who executes best.

The organizations that win will:

- Resist hype-driven deployment

- Build disciplined, data-centric strategies

- Focus relentlessly on measurable outcomes

In a landscape where 80% fail, success is no longer about adopting AI—it is about operationalizing it at scale.

And that is the real competitive advantage.
